9 Model Optimizations


Deploying machine learning models, especially on resource-constrained embedded systems, makes model optimization a necessity. Machine learning often demands substantial computational resources, while these systems are limited in memory, processing power, and energy. This chapter covers the art and science of optimizing machine learning models so that they are lightweight, efficient, and effective when deployed in TinyML scenarios.

Learn techniques like pruning, knowledge distillation and specialized model architectures to represent models more efficiently

Understand quantization methods to reduce model size and enable faster inference through reduced precision numerics

Explore hardware-aware optimization approaches to match models to target device capabilities

Develop holistic thinking to balance tradeoffs in model complexity, accuracy, latency, power etc. based on application requirements

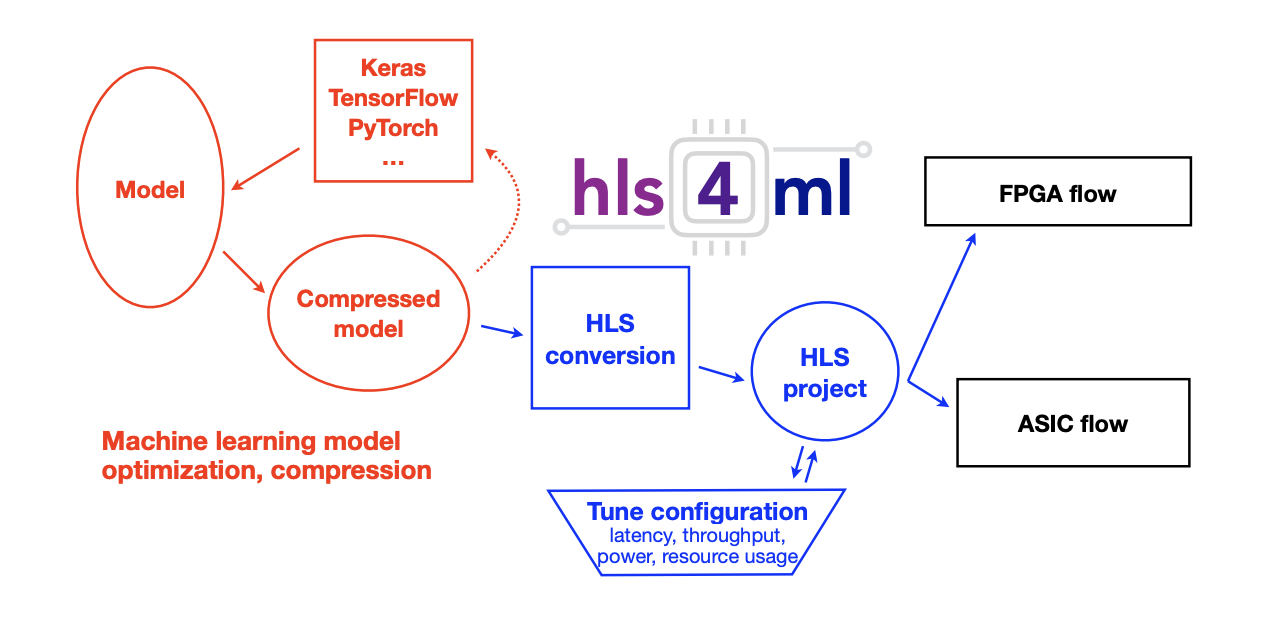

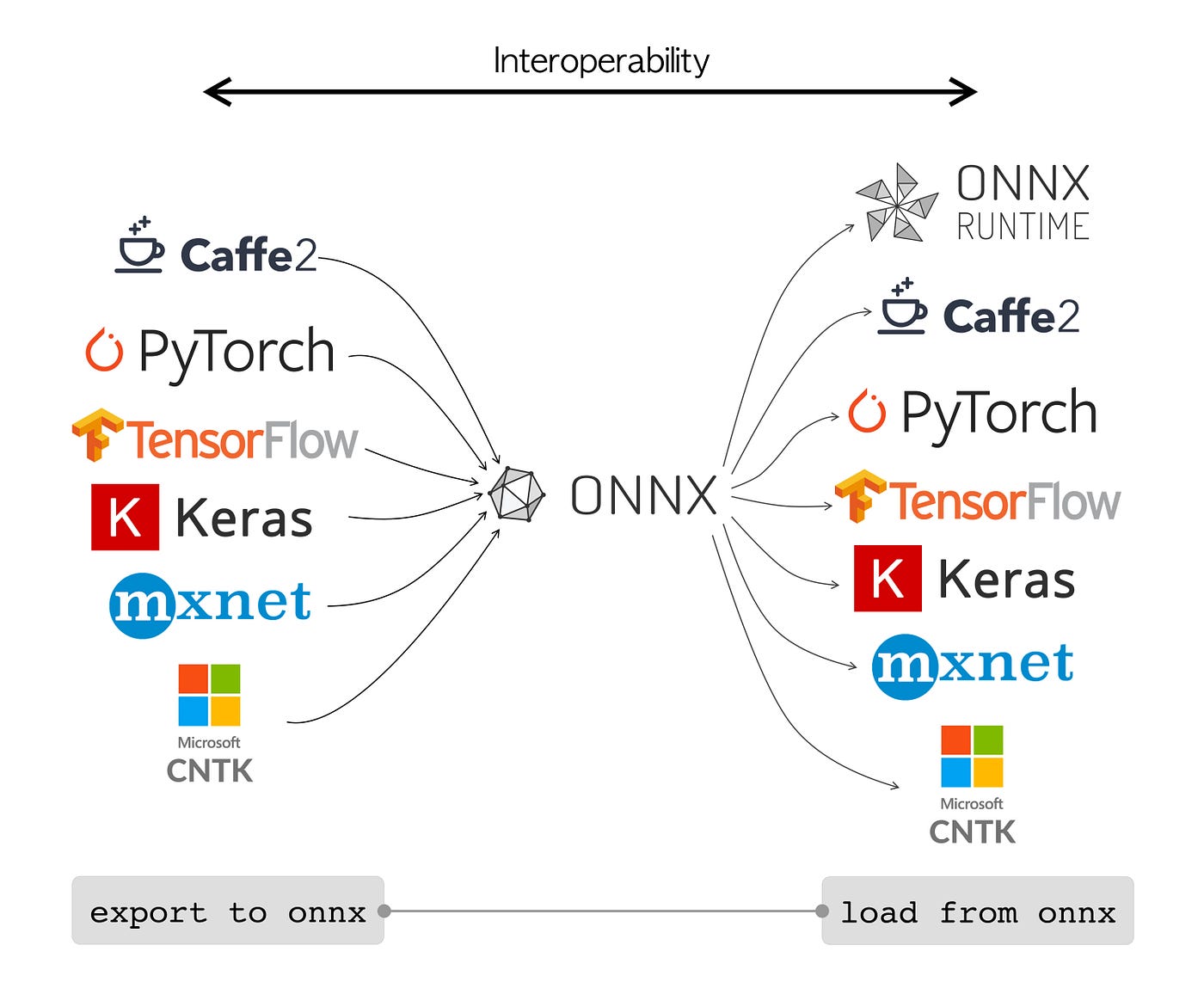

Discover software tools like frameworks and model conversion platforms that enable deployment of optimized models

Gain strategic insight into selecting and applying model optimizations based on use case constraints and hardware targets

9.1 Introduction

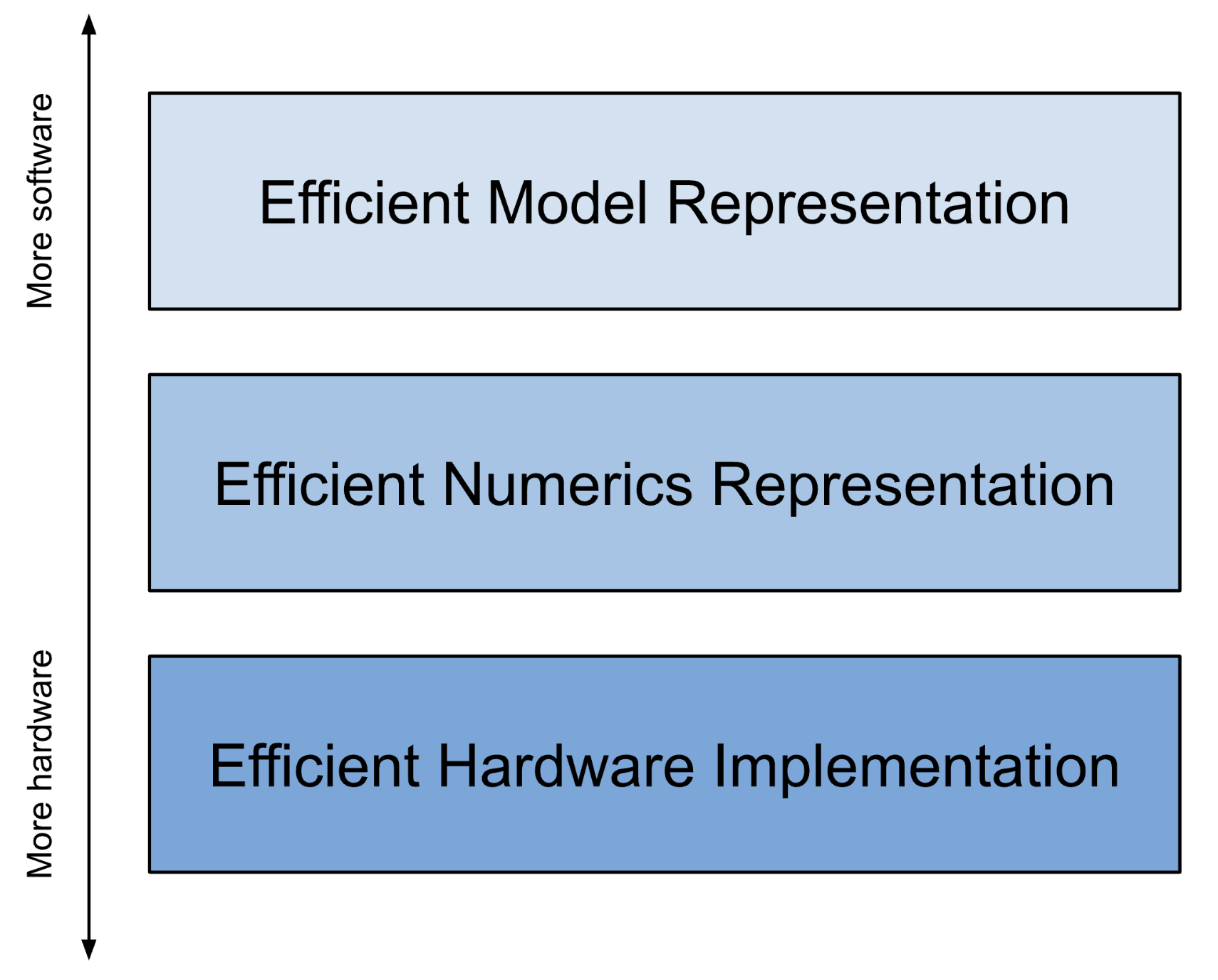

We have structured this chapter in three tiers. First, in Section 9.2 we examine the significance and methodologies of reducing the parameter complexity of models without compromising their inference capabilities. Techniques such as pruning and knowledge distillation are discussed, offering insights into how models can be compressed and simplified while maintaining, or even enhancing, their performance.

Going one level lower, in Section 9.3, we study the role of numerical precision in model computations and how altering it impacts model size, speed, and accuracy. We will examine the various numerical formats and how reduced-precision arithmetic can be leveraged to optimize models for embedded deployment.

Finally, as we go lower and closer to the hardware, in Section 9.4, we will navigate through the landscape of hardware-software co-design, exploring how models can be optimized by tailoring them to the specific characteristics and capabilities of the target hardware. We will discuss how models can be adapted to exploit the available hardware resources effectively.

9.2 Efficient Model Representation

The first avenue of attack for model optimization starts in familiar territory for most ML practitioners: the model’s architecture itself, which is the highest level of abstraction at which a model is parameterized.

Most traditional ML practitioners design models with a general high-level objective in mind, whether it be image classification, person detection, or keyword spotting, as mentioned previously in this textbook. Their designs usually fit naturally within some soft constraints imposed by limited compute resources during development, but they are generally not aware of later constraints, such as those that arise if the model is to be deployed on a more constrained device instead of the cloud.

In this section, we’ll discuss how practitioners can harness principles of hardware-software co-design even at a model’s high level architecture to make their models compatible with edge devices. From most to least hardware aware at this level of modification, we discuss several of the most common strategies for efficient model parametrization: pruning, model compression, and edge-friendly model architectures. You were introduced to pruning and model compression in Section 8.4; now, this section will go one step beyond the definitions to provide you with a technical understanding of how these techniques work.

9.2.1 Pruning

Overview

Model pruning is a technique in machine learning that reduces the size and complexity of a neural network model while maintaining its predictive capabilities as much as possible. The goal of model pruning is to remove redundant or non-essential components of the model, including connections between neurons, individual neurons, or even entire layers of the network.

This process typically involves analyzing the machine learning model to identify and remove weights, nodes, or layers that have little impact on the model’s outputs. By selectively pruning a model in this way, the total number of parameters can be reduced significantly without substantial declines in model accuracy. The resulting compressed model requires less memory and computational resources to train and run while enabling faster inference times.

Model pruning is especially useful when deploying machine learning models to devices with limited compute resources, such as mobile phones or TinyML systems. The technique facilitates the deployment of larger, more complex models on these devices by reducing their resource demands. Additionally, smaller models can require less data to generalize well and tend to be less prone to overfitting. By providing an efficient way to simplify models, model pruning has become a vital technique for optimizing neural networks in machine learning.

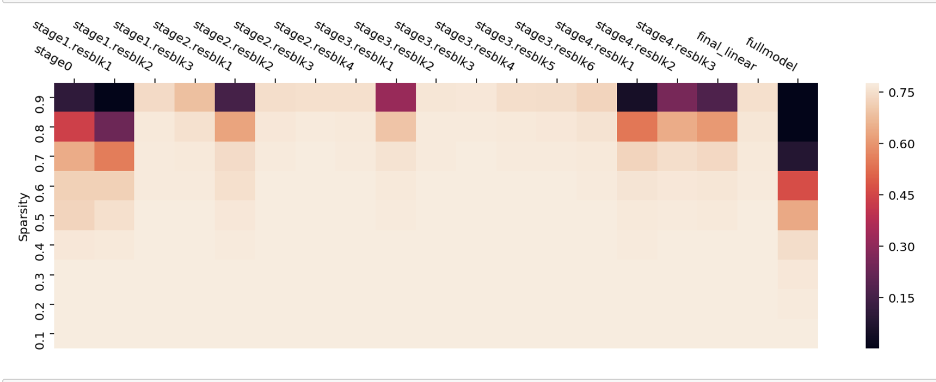

Several pruning techniques are common in machine learning, including structured pruning, unstructured pruning, iterative pruning, Bayesian pruning, and even random pruning. In addition to pruning the weights, one can also prune the activations. Activation pruning specifically targets neurons or filters that activate rarely or have overall low activation. There are numerous other methods, such as sensitivity and movement pruning. For a comprehensive list of methods, the reader is encouraged to read the following paper: “A Survey on Deep Neural Network Pruning: Taxonomy, Comparison, Analysis, and Recommendations” (2023).

So how does one choose a pruning method? Many variations exist, each differing in the heuristic for what is kept and what is pruned, as well as in the number of times pruning occurs. Traditionally, pruning happens after the model is fully trained, and the pruned model may experience mild accuracy loss. However, as we will discuss further, more recent work has found that pruning can be used during training (i.e., iteratively) to identify more efficient and accurate model representations.

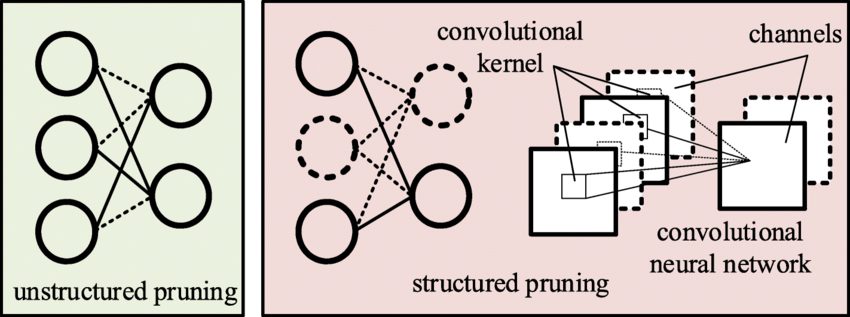

Structured Pruning

We start with structured pruning, a technique that reduces the size of a neural network by eliminating entire model-specific substructures while maintaining the overall model structure. It removes entire neurons/channels or layers based on importance criteria. For example, for a convolutional neural network (CNN), this could be certain filter instances or channels. For fully connected networks, this could be neurons themselves while maintaining full connectivity or even be elimination of entire model layers that are deemed to be insignificant. This type of pruning often leads to regular, structured sparse networks that are hardware friendly.

Best practices have started to emerge on how to think about structured pruning. There are three main components:

1. Structures to Target for Pruning

Given the variety of approaches, different structures within a neural network are pruned based on specific criteria. The primary structures for pruning include neurons, channels, and sometimes entire layers, each with its unique implications and methodologies. The goal in each approach is to ensure that the reduced model retains as much of the original model’s predictive prowess as possible while improving computational efficiency and reducing size.

When neurons are pruned, we are removing entire neurons along with their associated weights and biases, thereby reducing the width of the layer. This type of pruning is often utilized in fully connected layers.
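To make the bookkeeping concrete, here is a minimal NumPy sketch of removing one hidden neuron from a small fully connected network. The layer sizes, the variable names, and the `prune_neuron` helper are assumptions made for illustration; real frameworks store weights in their own layouts.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical two-layer MLP: 4 inputs -> 5 hidden neurons -> 3 outputs.
W1 = rng.normal(size=(5, 4))   # one row of incoming weights per hidden neuron
b1 = rng.normal(size=5)        # one bias per hidden neuron
W2 = rng.normal(size=(3, 5))   # one column of outgoing weights per hidden neuron

def prune_neuron(W1, b1, W2, idx):
    """Remove hidden neuron `idx`: incoming weights, bias, and outgoing weights."""
    return (np.delete(W1, idx, axis=0),
            np.delete(b1, idx, axis=0),
            np.delete(W2, idx, axis=1))

W1_p, b1_p, W2_p = prune_neuron(W1, b1, W2, idx=2)

# The pruned network is still dense and still runs a normal forward pass.
x = rng.normal(size=4)
hidden = np.maximum(W1_p @ x + b1_p, 0.0)   # ReLU
output = W2_p @ hidden
print(W1_p.shape, b1_p.shape, W2_p.shape, output.shape)
```

Note that the layer simply becomes narrower: no zeros are stored, which is why this form of pruning maps well onto ordinary dense kernels.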

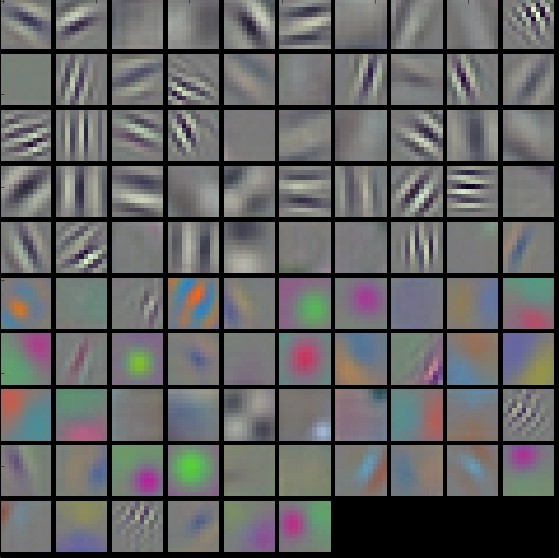

Channel pruning, which is predominantly applied in convolutional neural networks (CNNs), eliminates entire channels or filters. This reduces the depth of the feature maps and affects the network’s ability to extract certain features from the input data. It is particularly relevant in image processing tasks where computational efficiency is paramount.

Finally, layer pruning takes a more aggressive approach by removing entire layers of the network. This significantly reduces the network’s depth and thereby its capacity to model complex patterns and hierarchies in the data. This approach necessitates a careful balance to ensure that the model’s predictive capability is not unduly compromised.

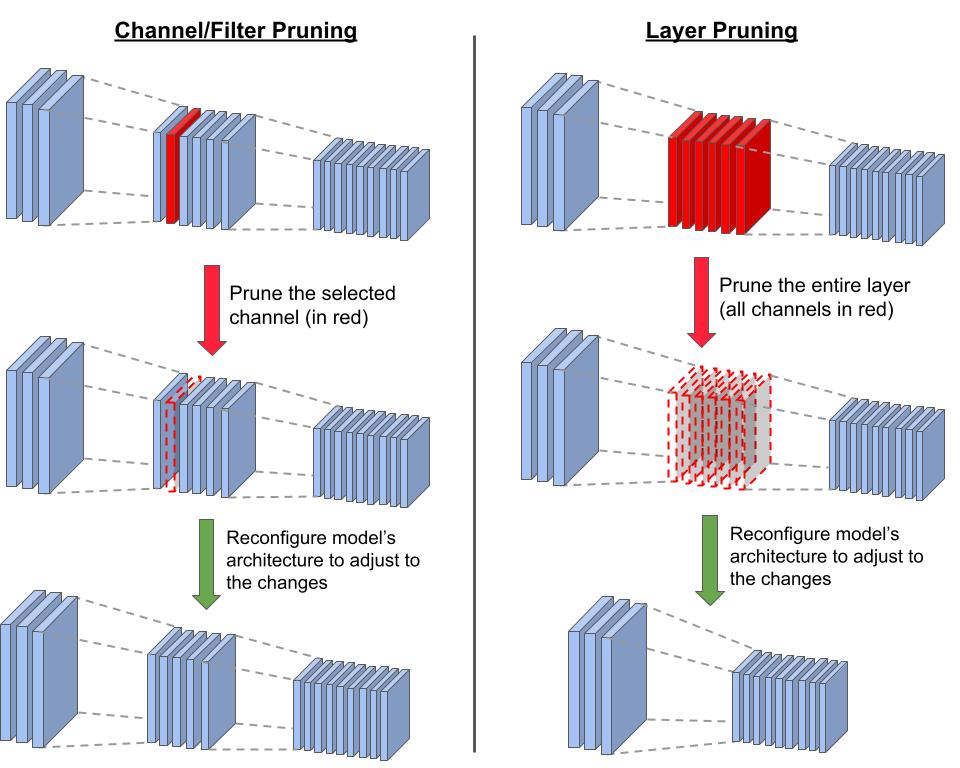

Figure 9.2 demonstrates the difference between channel/filter wise pruning and layer pruning. When we prune a channel, we have to reconfigure the model’s architecture in order to adapt to the structural changes. One adjustment is changing the number of input channels in the subsequent layer (here, the third and deepest layer): changing the depths of the filters that are applied to the layer with the pruned channel. On the other hand, pruning an entire layer (removing all the channels in the layer) requires more drastic adjustments. The main one involves modifying the connections between the remaining layers to replace or bypass the pruned layer. In our case, we reconfigure to connect the first and last layers. In all pruning cases, we have to fine-tune the new structure to adjust the weights.
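The adjustment to the subsequent layer can be sketched with plain arrays. The example below assumes convolution weights stored as `(out_channels, in_channels, kernel_h, kernel_w)`; the layer sizes and the `prune_channel` helper are hypothetical and only illustrate which axes change.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumed weight layout: (out_channels, in_channels, kernel_h, kernel_w).
conv1 = rng.normal(size=(8, 3, 3, 3))    # 8 filters applied to an RGB input
conv2 = rng.normal(size=(16, 8, 3, 3))   # consumes the 8 channels conv1 produces

def prune_channel(producer, consumer, channel):
    """Drop one output channel of `producer` and the matching input slice of `consumer`."""
    producer_p = np.delete(producer, channel, axis=0)   # remove the filter itself
    consumer_p = np.delete(consumer, channel, axis=1)   # thin every downstream filter
    return producer_p, consumer_p

conv1_p, conv2_p = prune_channel(conv1, conv2, channel=5)
print(conv1_p.shape, conv2_p.shape)
```

Any per-channel parameters attached to the pruned channel (for example, a bias or normalization statistics) would need the same treatment before fine-tuning.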

2. Establishing Criteria for Pruning

Establishing well-defined criteria for determining which specific structures to prune from a neural network model is a crucial component of the model pruning process. The core goal here is to identify and remove components that contribute the least to the model’s predictive capabilities, while retaining structures integral to preserving the model’s accuracy.

A widely adopted and effective strategy for systematically pruning structures relies on computing importance scores for individual components like neurons, filters, channels or layers. These scores serve as quantitative metrics to gauge the significance of each structure and its effect on the model’s output.

There are several techniques for assigning these importance scores:

- Weight Magnitude-Based Pruning: This approach assigns importance scores to a structure by evaluating the aggregate magnitude of their associated weights. Structures with smaller overall weight magnitudes are considered less critical to the network’s performance.

- Gradient-Based Pruning: This technique utilizes the gradients of the loss function with respect to the weights associated with a structure. Structures with low cumulative gradient magnitudes, indicating minimal impact on the loss when altered, are prime candidates for pruning.

- Activation-Based Pruning: This method tracks how often a neuron or filter is activated by storing this information in a parameter called the activation counter. Each time the structure is activated, the counter is incremented. A low activation count suggests that the structure is less relevant.

- Taylor Expansion-Based Pruning: This approach approximates the change in the loss function from removing a given weight. By assessing the cumulative loss disturbance from removing all the weights associated with a structure, you can identify structures with negligible impact on the loss, making them suitable candidates for pruning.

The idea is to measure, either directly or indirectly, the contribution of each component to the model’s output. Structures with minimal influence according to the defined criteria are pruned first. This enables selective, optimized pruning that maximally compresses models while preserving predictive capacity. In general, it is important to evaluate the impact of removing particular structures on the model’s output, with recent works such as (Rachwan et al. 2022) and (Lubana and Dick 2020) investigating combinations of techniques like magnitude-based pruning and gradient-based pruning.
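As a rough illustration of how such scores might be computed, the sketch below scores four toy filters with a weight-magnitude criterion and with one common first-order Taylor form. The weights and gradients are made-up numbers, and both function names are assumptions rather than any library's API.

```python
import numpy as np

def magnitude_scores(weights):
    """L1 norm per structure: sum of |w| over everything except the first axis."""
    return np.abs(weights).reshape(weights.shape[0], -1).sum(axis=1)

def taylor_scores(weights, grads):
    """First-order estimate of the loss change if a structure's weights were zeroed."""
    return np.abs((weights * grads).reshape(weights.shape[0], -1).sum(axis=1))

# Four toy "filters" with two weights each, plus hypothetical loss gradients.
weights = np.array([[0.5, -0.5], [0.01, 0.02], [1.0, 2.0], [-0.3, 0.1]])
grads = np.array([[0.2, 0.1], [1.0, 1.0], [0.0, 0.01], [0.2, -0.2]])

by_magnitude = int(np.argmin(magnitude_scores(weights)))
by_taylor = int(np.argmin(taylor_scores(weights, grads)))
print("least important by magnitude:", by_magnitude)
print("least important by Taylor estimate:", by_taylor)
```

In this toy case the two criteria pick different filters: the filter with the smallest weights sits on a steep part of the loss, while the filter with the largest weights barely affects it. This is one reason combinations of criteria are studied.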

3. Selecting a Pruning Strategy

Now that you understand some techniques for determining the importance of structures within a neural network, the next step is to decide how to apply these insights. This involves selecting an appropriate pruning strategy, which dictates how and when the identified structures are removed and how the model is fine-tuned to maintain its performance. Two main structured pruning strategies exist: iterative pruning and one-shot pruning.

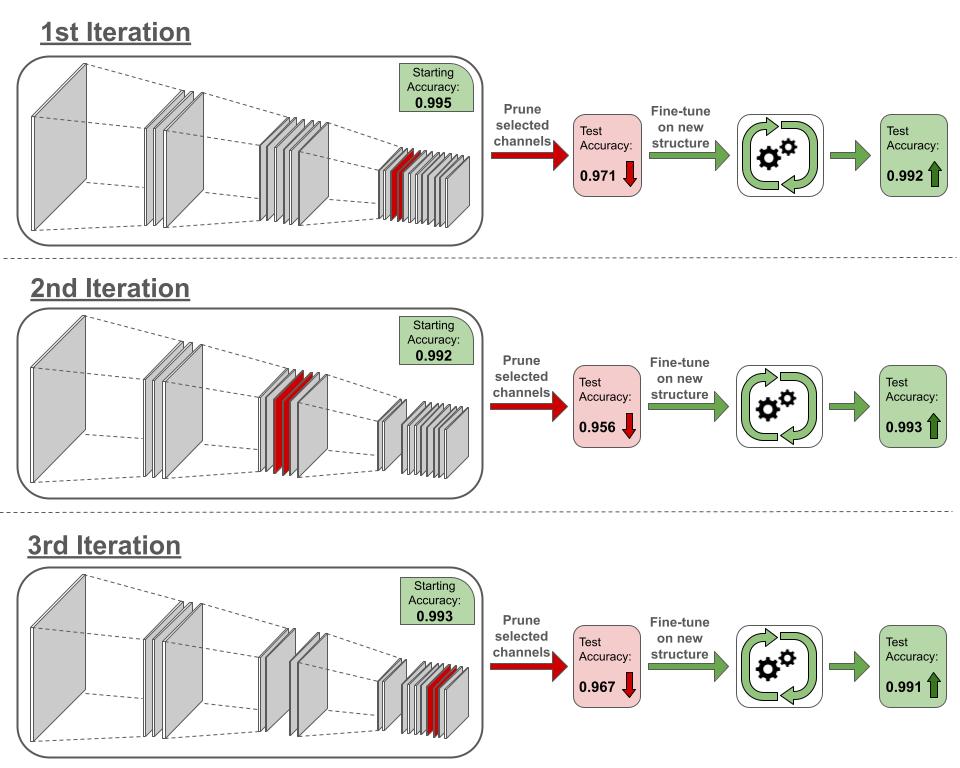

Iterative pruning gradually removes structures across multiple cycles of pruning followed by fine-tuning. In each cycle, a small set of structures is pruned based on importance criteria. The model is then fine-tuned, allowing it to adjust smoothly to the structural changes before the next pruning iteration. This gradual, cyclic approach prevents abrupt accuracy drops. It allows the model to slowly adapt as structures are reduced across iterations.

Consider a situation where we wish to prune the 6 least effective channels (based on some specific criteria) from a convolutional neural network. In Figure 9.3, we show a simplified pruning process carried out over 3 iterations. In every iteration, we prune only 2 channels. Removing the channels results in accuracy degradation. In the first iteration, the accuracy drops from 0.995 to 0.971. However, after we fine-tune the model on the new structure, we are able to recover from the performance loss, bringing the accuracy up to 0.992. Since the structural changes are minor and gradual, the network can more easily adapt to them. Running the same process 2 more times, we end up with a final accuracy of 0.991 (a loss of only 0.4 percentage points from the original) and a 27% decrease in the number of channels. Thus, iterative pruning enables us to maintain performance while benefiting from increased computational efficiency due to the decreased model size.

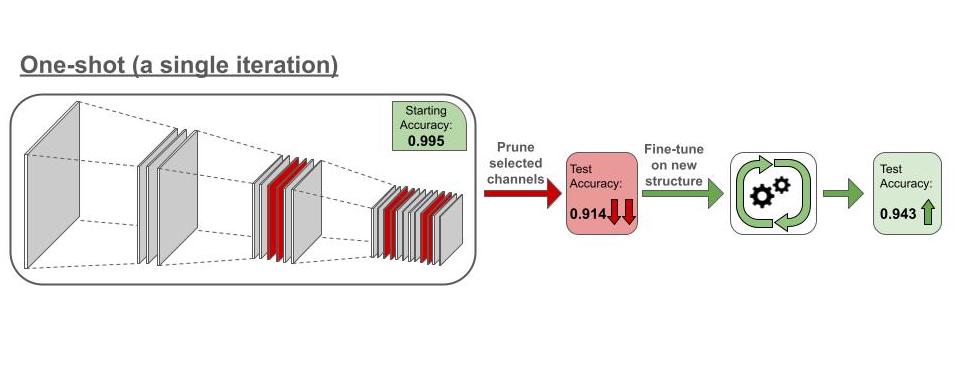

One-shot pruning takes a more aggressive approach by pruning a large portion of structures simultaneously in one shot based on predefined importance criteria. This is followed by extensive fine-tuning to recover model accuracy. While faster, this aggressive strategy can degrade accuracy if the model cannot recover during fine-tuning.

The choice between these strategies involves weighing factors like model size, target sparsity level, available compute and acceptable accuracy losses. One-shot pruning can rapidly compress models, but iterative pruning may enable better accuracy retention for a target level of pruning. In practice, the strategy is tailored based on use case constraints. The overarching aim is to generate an optimal strategy that removes redundancy, achieves efficiency gains through pruning, and finely tunes the model to stabilize accuracy at an acceptable level for deployment.

Now consider the same network we had in the iterative pruning example. Whereas in the iterative process we pruned 2 channels at a time, in one-shot pruning we would prune the 6 channels at once (Figure 9.4). Removing 27% of the network’s channels simultaneously alters the structure significantly, causing the accuracy to drop from 0.995 to 0.914. Given the major changes, the network is not able to properly adapt during fine-tuning, and the accuracy recovers only to 0.943, roughly a 5% degradation from the accuracy of the unpruned network. While the final structures in both the iterative and one-shot pruning processes are identical, the former maintains high performance while the latter suffers significant degradation.
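The control flow of the two strategies differs only in how many structures are removed per round. The sketch below is a simplified illustration, not a training recipe: `prune_in_rounds`, `scores_fn`, and `fine_tune_fn` are hypothetical names, the importance scores are random stand-ins, fine-tuning is a no-op, and the total of 22 channels is an assumption inferred from 6 channels being about 27% of the network.

```python
import numpy as np

def prune_in_rounds(scores_fn, fine_tune_fn, channels, n_prune, per_round):
    """Remove `n_prune` channels, `per_round` at a time, fine-tuning after each round."""
    active = list(channels)
    total = len(active)
    rounds = 0
    while total - len(active) < n_prune:
        k = min(per_round, n_prune - (total - len(active)))
        scores = np.asarray(scores_fn(active))        # re-score the current model
        drop = {active[i] for i in np.argsort(scores)[:k]}
        active = [c for c in active if c not in drop]
        fine_tune_fn(active)                          # let the model adapt
        rounds += 1
    return active, rounds

# Toy stand-ins (assumptions): fixed random importance scores, no-op fine-tuning.
rng = np.random.default_rng(0)
importance = rng.random(22)

def scores_fn(active):
    return importance[active]

def fine_tune_fn(active):
    pass

iterative, n_iterative = prune_in_rounds(scores_fn, fine_tune_fn, range(22), 6, 2)
one_shot, n_one_shot = prune_in_rounds(scores_fn, fine_tune_fn, range(22), 6, 6)
print(len(iterative), n_iterative, len(one_shot), n_one_shot)
```

With fixed scores, both calls end with the same 16 channels; in a real setting the difference lies in the fine-tuning that happens between rounds and in the scores being recomputed on the adapted model.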

Advantages of Structured Pruning

Structured pruning brings forth a myriad of advantages that cater to various facets of model deployment and utilization, especially in environments where computational resources are constrained.

Computational Efficiency: By eliminating entire structures, such as neurons or channels, structured pruning significantly diminishes the computational load during both training and inference phases, thereby enabling faster model predictions and training convergence. Moreover, the removal of structures inherently reduces the model’s memory footprint, ensuring that it demands less storage and memory during operation, which is particularly beneficial in memory-constrained environments like TinyML systems.

Hardware Efficiency: Structured pruning often results in models that are more amenable to deployment on specialized hardware, such as Field-Programmable Gate Arrays (FPGAs) or Application-Specific Integrated Circuits (ASICs), due to the regularity and simplicity of the pruned architecture. The reduced computational requirements also translate to lower energy consumption, which is crucial for battery-powered devices and sustainable computing practices.

Maintenance and Deployment: The pruned model, while smaller, retains its original architectural form, which can simplify the deployment pipeline and ensure compatibility with existing systems and frameworks. Also, with fewer parameters and simpler structures, the pruned model becomes easier to manage and monitor in production environments, potentially reducing the overhead associated with model maintenance and updates. Later on, when we dive into MLOps, this need will become apparent.

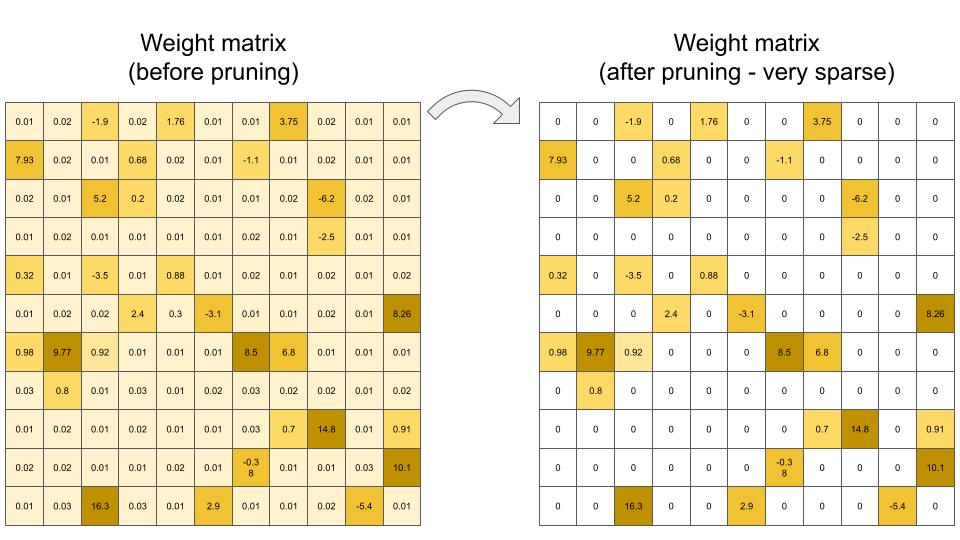

Unstructured Pruning

Unstructured pruning, as its name suggests, prunes the model without regard to model-specific substructure. Because there are fewer constraints on what can and cannot be pruned, it allows more aggressive pruning and can achieve higher model sparsity while maintaining accuracy. Generally, post-training unstructured pruning consists of three steps: defining an importance criterion for individual model parameters (weights), removing the weights that fall below the criterion, and optionally fine-tuning afterward to recover the accuracy lost during weight removal.

Unstructured pruning has some advantages over structured pruning. Removing individual weights instead of entire model substructures often leads, in practice, to smaller decreases in model accuracy. Furthermore, determining an importance criterion for an individual weight is generally much simpler than for an entire substructure of parameters, which makes unstructured pruning preferable when that overhead is hard or unclear to compute. Similarly, the process of structured pruning is generally less flexible, since removing individual weights is simpler than removing entire substructures and ensuring the model still works.
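A minimal sketch of the weight-removal step, assuming absolute weight magnitude as the importance criterion, is shown below. The `magnitude_prune` helper and the 90% sparsity target are illustrative choices, not a prescribed method.

```python
import numpy as np

def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest |w|."""
    k = int(sparsity * weights.size)                  # number of weights to remove
    mask = np.ones(weights.shape, dtype=bool)
    smallest = np.argsort(np.abs(weights), axis=None)[:k]
    mask.flat[smallest] = False
    return weights * mask, mask

rng = np.random.default_rng(0)
layer = rng.normal(size=(64, 64))
pruned, mask = magnitude_prune(layer, sparsity=0.9)
print("weights kept:", int(mask.sum()), "of", mask.size)
```

The returned mask matters for the optional fine-tuning step: if it is reapplied after every weight update, the pruned positions stay at zero while the surviving weights adapt.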

Unstructured pruning, while offering the potential for significant model size reduction and enhanced deployability, brings with it challenges related to managing sparse representations and ensuring computational efficiency. It is particularly useful in scenarios where achieving the highest possible model compression is paramount and where the deployment environment can handle sparse computations efficiently.

Table 9.1 provides a concise comparison between structured and unstructured pruning. In this table, aspects related to the nature and architecture of the pruned model (Definition, Model Regularity, and Compression Level) are grouped together, followed by aspects related to computational considerations (Computational Efficiency and Hardware Compatibility), and ending with aspects related to the implementation and adaptation of the pruned model (Implementation Complexity and Fine-Tuning Complexity). Both pruning strategies offer unique advantages and challenges, as shown in Table 9.1, and the selection between them should be influenced by specific project and deployment requirements.

| Aspect | Structured Pruning | Unstructured Pruning |
|---|---|---|
| Definition | Pruning entire structures (e.g., neurons, channels, layers) within the network | Pruning individual weights or neurons, resulting in sparse matrices or non-regular network structures |
| Model Regularity | Maintains a regular, structured network architecture | Results in irregular, sparse network architectures |
| Compression Level | May offer limited model compression compared to unstructured pruning | Can achieve higher model compression due to fine-grained pruning |
| Computational Efficiency | Typically more computationally efficient due to maintaining regular structures | Can be computationally inefficient due to sparse weight matrices, unless specialized hardware/software is used |
| Hardware Compatibility | Generally better compatible with various hardware due to regular structures | May require hardware that efficiently handles sparse computations to realize benefits |
| Implementation Complexity | Often simpler to implement and manage due to maintaining network structure | Can be complex to manage and compute due to sparse representations |
| Fine-Tuning Complexity | May require less complex fine-tuning strategies post-pruning | Might necessitate more complex retraining or fine-tuning strategies post-pruning |

In Figure 9.5 we have examples that illustrate the differences between unstructured and structured pruning. Observe that unstructured pruning can lead to models that no longer obey high-level structural guarantees of their original unpruned counterparts: the left network is no longer a fully connected network after pruning. Structured pruning on the other hand maintains those invariants: in the middle, the fully connected network is pruned in a way that the pruned network is still fully connected; likewise, the CNN maintains its convolutional structure, albeit with fewer filters.

Lottery Ticket Hypothesis

Pruning has evolved from a purely post-training technique that came at the cost of some accuracy, to a powerful meta-learning approach applied during training to reduce model complexity. This advancement in turn improves compute, memory, and latency efficiency at both training and inference.

A breakthrough finding that catalyzed this evolution was the lottery ticket hypothesis by Frankle and Carbin (2019). Their work states that within dense neural networks, there exist sparse subnetworks, referred to as “winning tickets,” that can match or even exceed the performance of the original model when trained in isolation. Specifically, these winning tickets, when initialized using the same weights as the original network, can achieve similarly high training convergence and accuracy on a given task. It is worthwhile pointing out that they empirically discovered the lottery ticket hypothesis, which was later formalized.

The intuition behind this hypothesis is that, during the training process of a neural network, many neurons and connections become redundant or unimportant, particularly with training techniques that encourage redundancy, such as dropout. Identifying, pruning out, and initializing these “winning tickets” allows for faster training and more efficient models, as they contain the essential model decision information for the task. Furthermore, in line with the bias-variance tradeoff, these tickets suffer less from overparameterization and thus tend to generalize better rather than overfitting to the task.

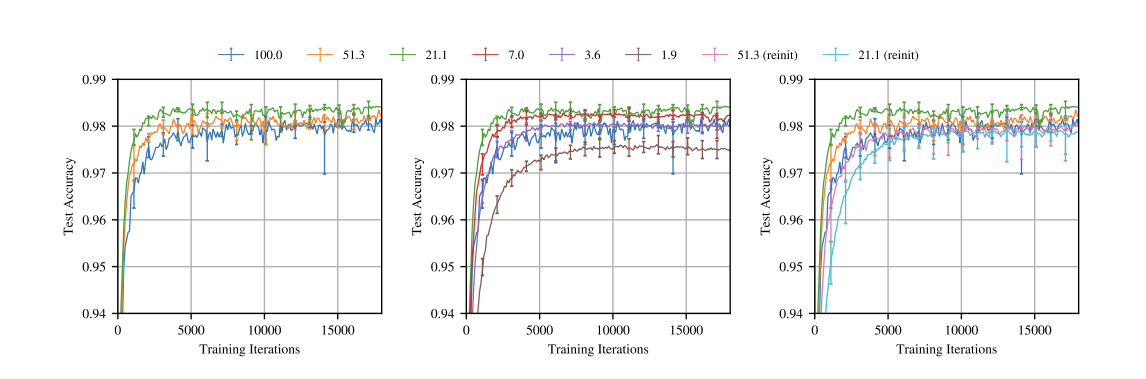

In Figure 9.6 we have an example showing pruning and training experiments on a fully connected LeNet over a variety of pruning ratios. In the left plot, notice how heavy pruning reveals a more efficient subnetwork (in green) that is 21.1% the size of the original network (in blue). The subnetwork achieves higher accuracy, and reaches it faster, than the unpruned version (the green line is above the blue line). However, pruning has a limit (a sweet spot): further pruning degrades performance, which eventually drops below that of the unpruned version (notice how the red, purple, and brown subnetworks gradually drop in accuracy) due to the significant loss in the number of parameters.

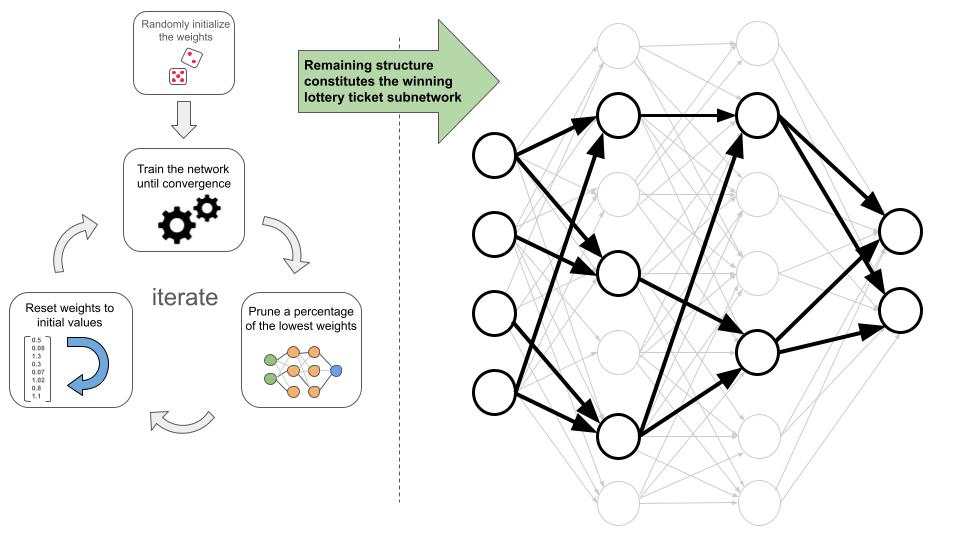

To uncover these winning lottery tickets within a neural network, a systematic process is followed. This process, which is illustrated in Figure 9.7 (left side), involves iteratively training, pruning, and reinitializing the network. The steps below outline this approach:

1. Initialize the network’s weights to random values.

2. Train the network until it converges to the desired performance.

3. Prune out some percentage of the edges with the lowest weight values.

4. Reinitialize the network with the same random values from step 1.

5. Repeat steps 2-4 for a number of times, or as long as the accuracy doesn’t significantly degrade.

When we finish, we are left with a pruned network (Figure 9.7 right side), which is a subnetwork of the one we start with. The subnetwork should have a significantly smaller structure, while maintaining a comparable level of accuracy.
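The train, prune, and reset loop described above can be sketched on a toy problem. The example below uses a linear regression model trained by gradient descent instead of a neural network, so it illustrates only the mechanics of the procedure, not the hypothesis itself. The data, the 30% per-round pruning rate, and all function names are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy task (an assumption): only the first 5 of 50 input features matter.
n, d = 200, 50
X = rng.normal(size=(n, d))
true_w = np.zeros(d)
true_w[:5] = [3.0, -2.0, 1.5, -1.0, 2.0]
y = X @ true_w + 0.1 * rng.normal(size=n)

def train(w_init, mask, steps=500, lr=0.05):
    """Gradient descent on mean squared error; masked-out weights stay at zero."""
    w = w_init * mask
    for _ in range(steps):
        grad = 2.0 * X.T @ (X @ w - y) / n
        w -= lr * grad * mask
    return w

def mse(w):
    return float(np.mean((X @ w - y) ** 2))

w0 = 0.1 * rng.normal(size=d)        # step 1: random initialization, saved for later
mask = np.ones(d)
for _ in range(5):                   # step 5: repeat
    w = train(w0, mask)              # step 2: train, always starting from w0
    alive = np.flatnonzero(mask)
    k = int(0.3 * alive.size)        # step 3: prune 30% of the surviving weights
    drop = alive[np.argsort(np.abs(w[alive]))[:k]]
    mask[drop] = 0.0
    # step 4: the reset is implicit, since the next train() restarts from w0 * mask

ticket = train(w0, mask)
print("weights kept:", int(mask.sum()), "of", d, "| mse:", round(mse(ticket), 4))
```

The important detail is that every round restarts from the saved initial values `w0` and not from the trained weights; only the mask carries information from one round to the next.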

Challenges & Limitations

There is no free lunch with pruning optimizations: some choices bring both improvements and costs. Below we discuss some tradeoffs for practitioners to consider.

Managing Sparse Weight Matrices: A sparse weight matrix is a matrix in which many of the elements are zero. Unstructured pruning often results in sparse weight matrices, where many weights are pruned to zero. While this reduces model size, it also introduces several challenges. Computational inefficiency can arise because standard hardware is optimized for dense matrix operations. Without optimizations that take advantage of sparsity, the computational savings from pruning can be lost. Although sparse matrices can be stored without specialized formats, effectively leveraging their sparsity requires careful handling to avoid wasting resources. Algorithmically, navigating sparse structures requires efficiently skipping over zero entries, which adds complexity to the computation and model updates.
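To see why sparsity does not automatically save memory or work, the sketch below converts a pruned matrix to compressed sparse row (CSR) form by hand and compares storage sizes. It assumes 32-bit values and 32-bit indices; the helper names are hypothetical, and in practice a sparse library or vendor kernel would replace these Python loops.

```python
import numpy as np

def to_csr(dense):
    """Convert a 2-D array to CSR arrays: (values, column indices, row pointers)."""
    values, col_idx, row_ptr = [], [], [0]
    for row in dense:
        nz = np.flatnonzero(row)
        values.extend(row[nz])
        col_idx.extend(nz)
        row_ptr.append(len(values))
    return (np.array(values, dtype=np.float32),
            np.array(col_idx, dtype=np.int32),
            np.array(row_ptr, dtype=np.int32))

def csr_bytes(dense):
    return sum(part.nbytes for part in to_csr(dense))

def csr_matvec(values, col_idx, row_ptr, x):
    """Matrix-vector product that touches only the stored nonzeros."""
    y = np.zeros(len(row_ptr) - 1, dtype=np.float32)
    for i in range(len(y)):
        lo, hi = row_ptr[i], row_ptr[i + 1]
        y[i] = values[lo:hi] @ x[col_idx[lo:hi]]
    return y

def sparsify(dense, sparsity):
    """Zero the smallest-magnitude entries (stand-in for unstructured pruning)."""
    out = dense.copy()
    k = int(sparsity * out.size)
    out.flat[np.argsort(np.abs(out), axis=None)[:k]] = 0.0
    return out

rng = np.random.default_rng(0)
dense = rng.normal(size=(256, 256)).astype(np.float32)
for sparsity in (0.5, 0.9):
    pruned = sparsify(dense, sparsity)
    print(f"sparsity {sparsity}: dense {dense.nbytes} B, CSR {csr_bytes(pruned)} B")
```

Because every stored value also needs a column index, this CSR layout is slightly larger than the dense matrix at 50% sparsity and only pays off at higher sparsity. The indirect indexing in `csr_matvec` is also the kind of irregular memory access that dense-optimized hardware handles poorly.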

Quality vs. Size Reduction: A key challenge in both structured and unstructured pruning is balancing size reduction with maintaining or improving predictive performance. Establishing robust pruning criteria, whether for removing entire structures (structured pruning) or individual weights (unstructured pruning), is essential. The chosen criteria must accurately identify elements whose removal minimally impacts performance. Careful experimentation is often needed to ensure the pruned model remains efficient while maintaining its predictive performance.

Fine-Tuning and Retraining: Post-pruning fine-tuning is imperative in both structured and unstructured pruning to recover lost performance and stabilize the model. The challenge encompasses determining the extent, duration, and nature of the fine-tuning process, which can be influenced by the pruning method and the degree of pruning applied.

Hardware Compatibility and Efficiency: Especially pertinent to unstructured pruning, hardware compatibility and efficiency become critical. Unstructured pruning often results in sparse weight matrices, which may not be efficiently handled by certain hardware, potentially negating the computational benefits of pruning (see Figure 9.8). Ensuring that pruned models, particularly those resulting from unstructured pruning, are scalable, compatible, and efficient on the target hardware is a significant consideration.

Legal and Ethical Considerations: Last but not least, adherence to legal and ethical guidelines is important, especially in domains with significant consequences. Pruning methods must undergo rigorous validation, testing, and potentially certification processes to ensure compliance with relevant regulations and standards, though arguably no formal standards or best practices currently exist that have been vetted and validated by third-party entities. This is particularly crucial in high-stakes applications like medical AI and autonomous driving, where quality drops due to pruning-like optimizations can be life-threatening. Moreover, ethical considerations extend beyond safety to fairness and equality; recent work by (Tran et al. 2022) has revealed that pruning can disproportionately impact people of color, underscoring the need for comprehensive ethical evaluation in the pruning process.

Imagine your neural network is a giant, overgrown bush. Pruning is like strategically trimming away branches to make it stronger and more efficient! In the Colab, you’ll learn how to do this trimming in TensorFlow. Understanding these concepts will give you the foundation to see how pruning makes models small enough to run on your phone!

9.2.2 Model Compression

Model compression techniques are crucial for deploying deep learning models on resource-constrained devices. These techniques aim to create smaller, more efficient models that preserve the predictive performance of the original models.

Knowledge Distillation

One popular technique is knowledge distillation (KD), which transfers knowledge from a large, complex “teacher” model to a smaller “student” model. The key idea is to train the student model to mimic the teacher’s outputs. The concept of KD was first popularized by Hinton, Vinyals, and Dean (2015).

Overview and Benefits

In knowledge distillation, the teacher’s outputs, known as soft targets, guide the training of the student model. Unlike traditional “hard targets” (the true labels), soft targets are the probability distributions over classes that the teacher model predicts. These distributions provide richer information about the relationships between classes, which can help the student model learn more effectively.

You have learned that the softmax function converts a model’s raw outputs into a probability distribution over classes. A key technique in KD is temperature scaling, which is applied to the softmax function of the teacher model’s outputs. By introducing a temperature parameter, the distribution can be adjusted: a higher temperature produces softer probabilities, meaning the differences between class probabilities become less extreme. This softening effect results in a more uniform distribution, where the model’s confidence in the most likely class is reduced, and other classes have higher, non-zero probabilities. This is valuable for the student model because it allows it to learn not just from the most likely class but from the relative probabilities of all classes, capturing subtle patterns that might be missed if trained only on hard targets. Thus, temperature scaling facilitates the transfer of more nuanced knowledge from the teacher to the student model.
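The effect of the temperature is easy to see numerically. The sketch below divides the logits by a temperature before applying softmax; the teacher logits and the temperature values are made-up numbers chosen for illustration.

```python
import numpy as np

def softmax(logits, temperature=1.0):
    """Softmax over logits divided by `temperature` (higher means softer output)."""
    z = np.asarray(logits, dtype=np.float64) / temperature
    z = z - z.max()                     # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

teacher_logits = [6.0, 2.0, 1.0]        # hypothetical teacher outputs for 3 classes
sharp = softmax(teacher_logits, temperature=1.0)
soft = softmax(teacher_logits, temperature=4.0)
print("T=1:", np.round(sharp, 3))
print("T=4:", np.round(soft, 3))
```

At the higher temperature the top class keeps the largest probability but loses mass to the other classes, which is the extra signal the student learns from.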

The loss function in knowledge distillation typically combines two components: a distillation loss and a classification loss. The distillation loss, often calculated using Kullback-Leibler (KL) divergence, measures the difference between the soft targets produced by the teacher model and the outputs of the student model, encouraging the student to mimic the teacher’s predictions. Meanwhile, the classification loss ensures that the student model correctly predicts the true labels based on the original data. Together, these two components help the student model retain the knowledge of the teacher while adhering to the ground truth labels.
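One common way to combine the two terms is a weighted sum, sketched below in NumPy. The weighting factor `alpha`, the default temperature, and the function names are assumptions for illustration. The multiplication of the distillation term by the squared temperature follows a convention suggested in the original KD paper to keep gradient magnitudes comparable; other formulations exist.

```python
import numpy as np

def log_softmax(logits, temperature=1.0):
    z = np.asarray(logits, dtype=np.float64) / temperature
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def distillation_loss(student_logits, teacher_logits, labels,
                      temperature=4.0, alpha=0.5):
    """alpha * T^2 * KL(teacher || student) + (1 - alpha) * cross-entropy."""
    log_p_student = log_softmax(student_logits, temperature)
    log_p_teacher = log_softmax(teacher_logits, temperature)
    p_teacher = np.exp(log_p_teacher)
    # Distillation term: KL divergence between softened distributions.
    kl = (p_teacher * (log_p_teacher - log_p_student)).sum(axis=-1).mean()
    # Classification term: cross-entropy against hard labels at temperature 1.
    hard = log_softmax(student_logits)
    ce = -hard[np.arange(len(labels)), labels].mean()
    return alpha * temperature ** 2 * kl + (1.0 - alpha) * ce

# Hypothetical batch of 2 examples over 3 classes.
teacher = np.array([[6.0, 2.0, 1.0], [0.5, 4.0, 0.0]])
student = np.array([[2.0, 1.5, 1.0], [1.0, 1.2, 0.8]])
labels = np.array([0, 1])
print(round(distillation_loss(student, teacher, labels), 4))
```

Setting `alpha=1.0` trains purely on the teacher's soft targets, and `alpha=0.0` reduces to ordinary supervised training.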

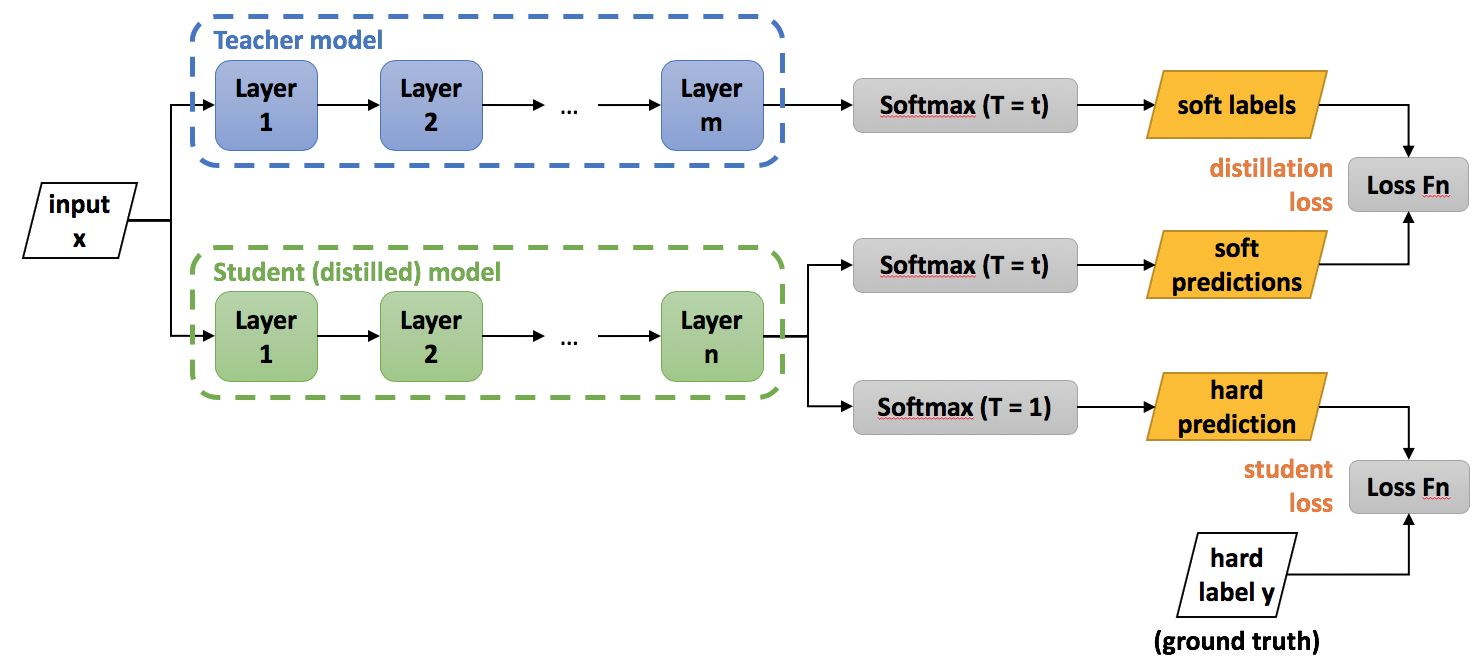

When configured well, these components enable the student model to assimilate the teacher model’s knowledge, yielding efficient and robust smaller models that retain much of the predictive power of their larger counterparts. Figure 9.9 visualizes the training procedure of knowledge distillation. Note how the logits or soft labels of the teacher model are used to provide a distillation loss for the student model to learn from.

Challenges

KD comes with its own set of challenges that researchers and practitioners must address. One is the careful tuning of hyperparameters, such as the temperature parameter in the softmax function and the weighting between the distillation and classification loss in the objective function. Striking a balance that effectively leverages the softened outputs of the teacher model while maintaining fidelity to the true data labels is non-trivial and can significantly impact the student model’s performance and generalization capabilities.

Furthermore, the architecture of the student model itself poses a considerable challenge. Designing a model that is compact to meet computational and memory constraints, while still being capable of assimilating the essential knowledge from the teacher model, demands a nuanced understanding of model capacity and the inherent trade-offs involved in compression. The student model must be carefully architected to navigate the dichotomy of size and performance, ensuring that the distilled knowledge is meaningfully captured and utilized. Moreover, the choice of teacher model, which inherently influences the quality and nature of the knowledge to be transferred, is important and it introduces an added layer of complexity to the KD process.

These challenges underscore the necessity for a thorough and nuanced approach to implementing KD, ensuring that the resultant student models are both efficient and effective in their operational contexts.

Low-rank Matrix Factorization

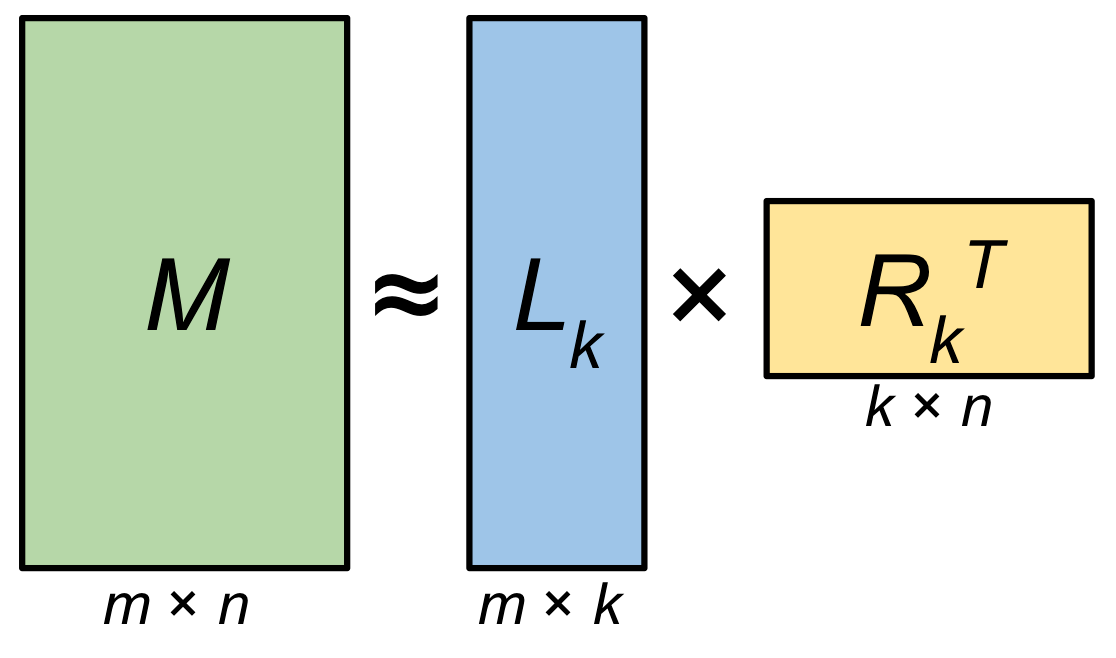

In a similar spirit of approximation, low-rank matrix factorization (LRMF) is a mathematical technique used in linear algebra and data analysis to approximate a given matrix by decomposing it into two or more lower-dimensional matrices. The fundamental idea is to express a high-dimensional matrix as a product of lower-rank matrices, which can help reduce the complexity of data while preserving its essential structure. Mathematically, given a matrix \(A \in \mathbb{R}^{m \times n}\), LRMF seeks matrices \(U \in \mathbb{R}^{m \times k}\) and \(V \in \mathbb{R}^{k \times n}\) such that \(A \approx UV\), where \(k\) is the rank and is typically much smaller than \(m\) and \(n\).

Background and Benefits

One of the seminal works in the realm of matrix factorization, particularly in the context of recommendation systems, is the paper by Koren, Bell, and Volinsky (2009). The authors look into various factorization models, providing insights into their efficacy in capturing the underlying patterns in the data and enhancing predictive accuracy in collaborative filtering. LRMF has been widely applied in recommendation systems (such as Netflix, Facebook, etc.), where the user-item interaction matrix is factorized to capture latent factors corresponding to user preferences and item attributes.

The main advantage of low-rank matrix factorization lies in its ability to reduce data dimensionality, as shown in Figure 9.10: there are fewer parameters to store, which reduces storage requirements at the cost of some additional compute. This can lead to faster computations and more compact data representations, which is especially valuable when dealing with large datasets. Additionally, it may aid in noise reduction and can reveal underlying patterns and relationships in the data.

Figure 9.10 illustrates the decrease in parameterization enabled by low-rank matrix factorization. Observe how the matrix \(M\) can be approximated by the product of matrices \(L_k\) and \(R_k^T\). For intuition, most fully connected layers in networks are stored as a projection matrix \(M\), which requires \(m \times n\) parameters to be loaded for computation. By approximating it as the product of two lower-rank matrices, we only need to store \(m \times k + k \times n\) parameters, at the cost of an additional matrix multiplication. So long as \(k(m + n) < mn\), that is \(k < \frac{mn}{m+n}\) (which reduces to \(k < n/2\) for a square matrix with \(m = n\)), this factorization has fewer parameters to store while adding a computation of runtime \(O(mkn)\) (Gu 2023).
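The parameter savings can be checked numerically. The sketch below uses a truncated singular value decomposition from NumPy as one way to obtain the factors \(L_k\) and \(R_k^T\); the dimensions and the synthetic matrix are assumptions chosen so that the rank-\(k\) factorization is essentially exact.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n, k = 64, 48, 8  # hypothetical layer shape and target rank

# Build a matrix whose true rank is k, so a rank-k factorization can be exact.
M = rng.standard_normal((m, k)) @ rng.standard_normal((k, n))

# Truncated SVD: keep only the k largest singular values.
U, s, Vt = np.linalg.svd(M, full_matrices=False)
L_k = U[:, :k] * s[:k]   # shape (m, k); singular values folded into the left factor
R_k_T = Vt[:k, :]        # shape (k, n)

dense_params = m * n
factored_params = m * k + k * n
rel_error = np.linalg.norm(M - L_k @ R_k_T) / np.linalg.norm(M)
break_even_rank = (m * n) / (m + n)  # storage is reduced only below this rank

# Applying the two factors in sequence gives the same output as the dense layer.
x = rng.standard_normal(n)
y_dense = M @ x
y_factored = L_k @ (R_k_T @ x)

print(dense_params, factored_params, rel_error, break_even_rank)
```

For real weight matrices the rank is rarely exact, so the relative error is nonzero and grows as \(k\) shrinks.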

Challenges

However, practitioners and researchers encounter a range of challenges that require careful attention. As with any lossy compression technique, information may be lost during the approximation: choosing a rank that balances information loss against computational cost is difficult, and it adds another hyperparameter to tune.

Low-rank matrix factorization is a valuable tool for dimensionality reduction and making compute fit onto edge devices but, like other techniques, needs to be carefully tuned to the model and task at hand. A key challenge resides in managing the computational complexity inherent to LRMF, especially when grappling with high-dimensional and large-scale data. The computational burden, particularly in the context of real-time applications and massive datasets, remains a significant hurdle for effectively using LRMF.

Moreover, the conundrum of choosing the optimal rank \(k\), for the factorization introduces another layer of complexity. The selection of \(k\) inherently involves a trade-off between approximation accuracy and model simplicity, and identifying a rank that adeptly balances these conflicting objectives often demands a combination of domain expertise, empirical validation, and sometimes, heuristic approaches. The challenge is further amplified when the data encompasses noise or when the inherent low-rank structure is not pronounced, making the determination of a suitable \(k\) even more elusive.
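One heuristic for choosing \(k\), shown here only as an illustration, is to keep the smallest rank whose singular values retain a chosen fraction of the squared spectrum. The function name, the threshold values, and the toy matrix are assumptions; in practice the choice should be validated against task accuracy.

```python
import numpy as np

def rank_for_energy(singular_values, energy=0.95):
    """Smallest k whose top-k singular values hold `energy` of the squared spectrum."""
    squared = np.asarray(singular_values, dtype=float) ** 2
    cumulative = np.cumsum(squared) / np.sum(squared)
    k = int(np.searchsorted(cumulative, energy)) + 1
    return min(k, len(squared))

# Toy matrix with a known spectrum: two dominant directions, two weak ones.
A = np.diag([10.0, 5.0, 1.0, 0.1])
s = np.linalg.svd(A, compute_uv=False)  # singular values, largest first

k_95 = rank_for_energy(s, energy=0.95)
k_999 = rank_for_energy(s, energy=0.999)
print(k_95, k_999)
```

When the spectrum decays slowly, as with noisy data or weak low-rank structure, this criterion returns a rank close to the full dimension, which reflects the difficulty described above.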

Handling missing or sparse data, a common occurrence in applications like recommendation systems, poses another substantial challenge. Traditional matrix factorization techniques, such as Singular Value Decomposition (SVD), are not directly applicable to matrices with missing entries, necessitating the development and application of specialized algorithms that can factorize incomplete matrices while mitigating the risks of overfitting to the observed entries. This often involves incorporating regularization terms or constraining the factorization in specific ways, which in turn introduces additional hyperparameters that need to be judiciously selected.

Furthermore, in scenarios where data evolves or grows over time, developing LRMF models that can adapt to new data without necessitating a complete re-factorization is a critical yet challenging endeavor. Online and incremental matrix factorization algorithms seek to address this by enabling the update of factorized matrices as new data arrives, yet ensuring stability, accuracy, and computational efficiency in these dynamic settings remains an intricate task. This is particularly true in TinyML, where redeploying refreshed models to edge devices is itself difficult.

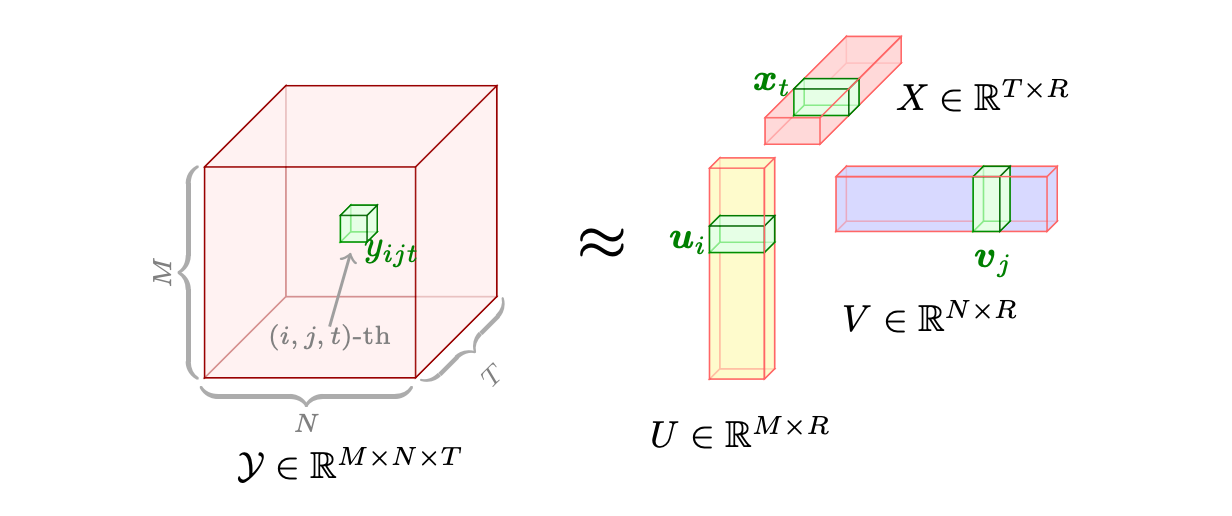

Tensor Decomposition

You have learned in Section 6.4.1 that tensors are flexible structures, commonly used by ML Frameworks, that can represent data in higher dimensions. Similar to low-rank matrix factorization, more complex models may store weights in higher dimensions, such as tensors. Tensor decomposition is the higher-dimensional analogue of matrix factorization, where a model tensor is decomposed into lower rank components (see Figure 9.11). These lower-rank components are easier to compute on and store but may suffer from the same issues mentioned above, such as information loss and the need for nuanced hyperparameter tuning. Mathematically, given a tensor \(\mathcal{A}\), tensor decomposition seeks to represent \(\mathcal{A}\) as a combination of simpler tensors, facilitating a compressed representation that approximates the original data while minimizing the loss of information.

The work of Tamara G. Kolda and Brett W. Bader, “Tensor Decompositions and Applications” (2009), stands out as a seminal paper in the field of tensor decompositions. The authors provide a comprehensive overview of various tensor decomposition methods, exploring their mathematical underpinnings, algorithms, and a wide array of applications, ranging from signal processing to data mining. Of course, the reason we are discussing it is because it has huge potential for system performance improvements, particularly in the space of TinyML, where throughput and memory footprint savings are crucial to feasibility of deployments.

This Colab dives into a technique for compressing models while maintaining high accuracy. The key idea is to train a model with an extra penalty term that encourages the model to be more compressible. Then, the model is encoded using a special coding scheme that aligns with this penalty. This approach allows you to achieve compressed models that perform just as well as the original models and is useful in deploying models to devices with limited resources like mobile phones and edge devices.

9.2.3 Edge-Aware Model Design

Now, we reach the other end of the hardware-software gradient, where we specifically make model architecture decisions directly given knowledge of the edge devices we wish to deploy on.

As covered in previous sections, edge devices are constrained in memory and in parallelizable computation. If there are critical inference speed requirements, computations must be flexible enough to satisfy hardware constraints, something that can be designed in at the model architecture level. Furthermore, trying to cram SOTA large ML models onto edge devices, even after pruning and compression, is generally infeasible purely due to size: the model complexity itself must be chosen carefully so that it fits the device. Edge ML developers have approached this architectural challenge both through designing bespoke edge ML model architectures and through device-aware neural architecture search (NAS), which can more systematically generate feasible on-device model architectures.

Model Design Techniques

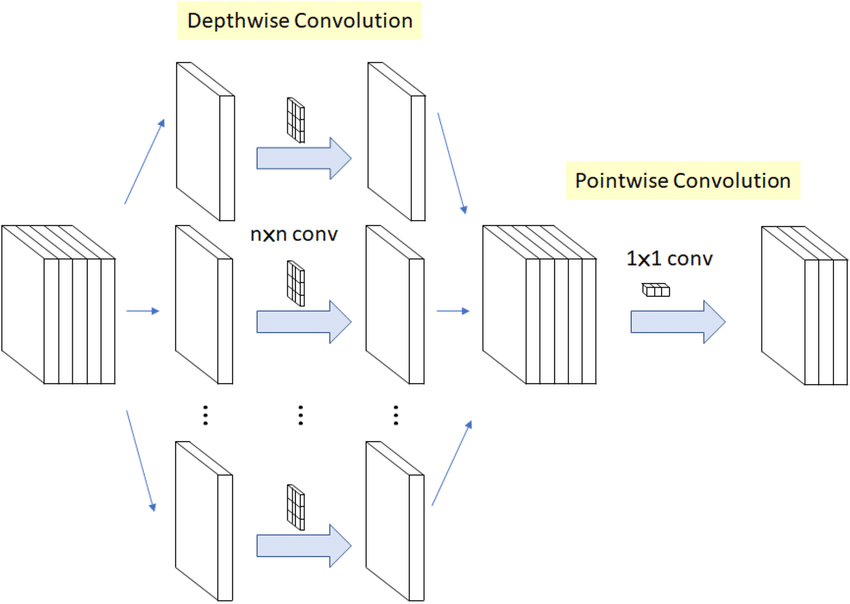

One edge-friendly architecture design, commonly used in deep learning for image processing, is the depthwise separable convolution. It consists of two distinct steps. The first is the depthwise convolution, where each input channel is convolved independently with its own set of learnable filters, as shown in Figure 9.12. This step significantly reduces the number of parameters and computations compared to a standard convolution. The second step is the pointwise convolution, which combines the outputs of the depthwise convolution channels through a 1x1 convolution, creating inter-channel interactions. Benefits include reduced model size, faster inference times, and often better generalization due to fewer parameters, making the approach suitable for mobile and embedded applications. However, depthwise separable convolutions may not capture complex spatial interactions as effectively as standard convolutions and might require more depth (layers) to achieve the same level of representational power, potentially leading to longer training times. Nonetheless, their efficiency in terms of parameters and computation makes them a popular choice in modern convolutional neural network architectures.
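The parameter savings follow directly from the two-step structure. The sketch below counts weights for a single layer, ignoring biases; the kernel size and channel counts are hypothetical.

```python
def standard_conv_params(kernel, c_in, c_out):
    """Weights in a standard convolution: one kernel x kernel x c_in filter per output channel."""
    return kernel * kernel * c_in * c_out

def separable_conv_params(kernel, c_in, c_out):
    """Weights in a depthwise separable convolution (depthwise step + 1x1 pointwise step)."""
    depthwise = kernel * kernel * c_in   # one kernel x kernel filter per input channel
    pointwise = c_in * c_out             # 1x1 convolution mixing the channels
    return depthwise + pointwise

kernel, c_in, c_out = 3, 32, 64  # hypothetical layer configuration
standard = standard_conv_params(kernel, c_in, c_out)
separable = separable_conv_params(kernel, c_in, c_out)

print(standard, separable, round(standard / separable, 2))
```

For this configuration the separable layer needs roughly one eighth of the weights. Multiply-accumulate counts scale the same way, since both variants are applied at every output position.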

Example Model Architectures

In this vein, a number of recent architectures have been, from inception, specifically designed for maximizing accuracy on an edge deployment, notably SqueezeNet, MobileNet, and EfficientNet.

SqueezeNet by Iandola et al. (2016), for instance, uses a compact architecture with 1x1 convolutions and fire modules to minimize the number of parameters while maintaining strong accuracy.

MobileNet by Howard et al. (2017), on the other hand, employs the aforementioned depthwise separable convolutions to reduce both computation and model size.

EfficientNet by Tan and Le (2023) takes a different approach by optimizing network scaling (i.e., varying the depth, width, and resolution of a network) through compound scaling, a more nuanced variation of network scaling, to achieve superior performance with fewer parameters.

These models are essential in the context of edge computing where limited processing power and memory require lightweight yet effective models that can efficiently perform tasks such as image recognition, object detection, and more. Their design principles showcase the importance of intentionally tailored model architecture for edge computing, where performance and efficiency must fit within constraints.

Streamlining Model Architecture Search

Lastly, to address the challenge of finding efficient model architectures that are compatible with edge devices, researchers have developed systematized pipelines that streamline the search for performant designs. Two notable frameworks in this space are TinyNAS by J. Lin et al. (2020) and MorphNet by Gordon et al. (2018), which automate the process of optimizing neural network architectures for edge deployment.

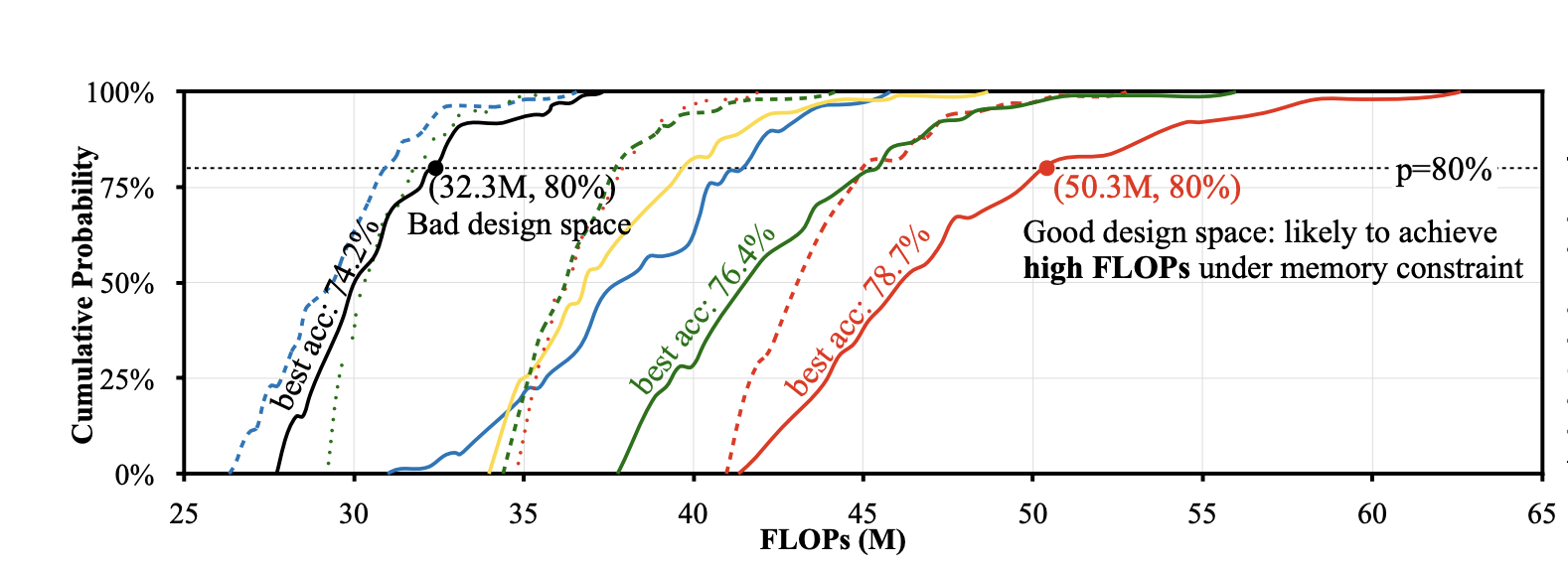

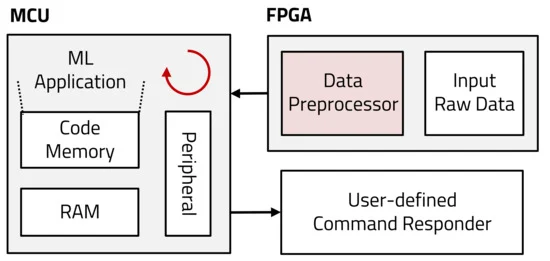

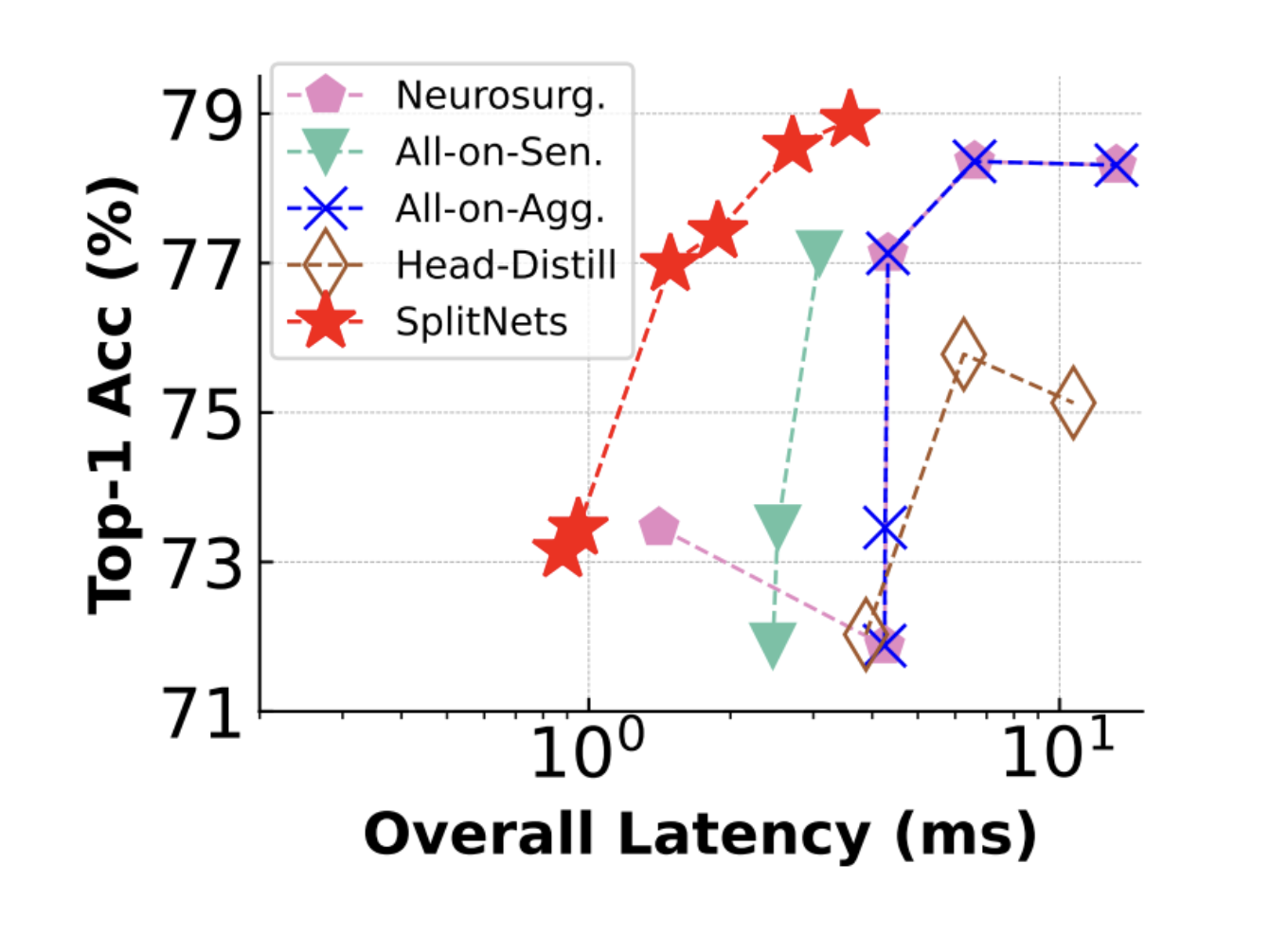

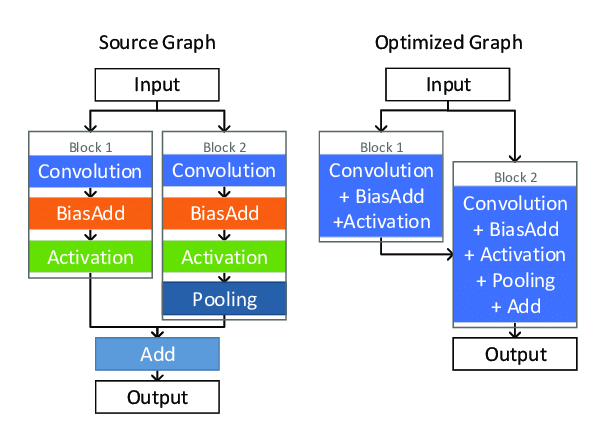

TinyNAS is an innovative neural architecture search framework introduced in the MCUNet paper, designed to efficiently discover lightweight neural network architectures for edge devices with limited computational resources. Leveraging reinforcement learning and a compact search space of micro neural modules, TinyNAS optimizes for both accuracy and latency, enabling the deployment of deep learning models on microcontrollers, IoT devices, and other resource-constrained platforms. Specifically, TinyNAS, in conjunction with a network optimizer TinyEngine, generates different search spaces by scaling the input resolution and the model width of a model, then collects the computation FLOPs distribution of satisfying networks within the search space to evaluate its priority. TinyNAS relies on the assumption that a search space that accommodates higher FLOPs under memory constraint can produce higher accuracy models, something that the authors verified in practice in their work. In empirical performance, TinyEngine reduced the peak memory usage of models by around 3.4 times and accelerated inference by 1.7 to 3.3 times compared to TFLite and CMSIS-NN.

Similarly, MorphNet is a neural network optimization framework designed to automatically reshape and morph the architecture of deep neural networks, optimizing them for specific deployment requirements. It achieves this through two steps: first, it leverages a set of customizable network morphing operations, such as widening or deepening layers, to dynamically adjust the network’s structure. These operations enable the network to adapt to various computational constraints, including model size, latency, and accuracy targets, which are extremely prevalent in edge computing usage. In the second step, MorphNet uses a reinforcement learning-based approach to search for the optimal permutation of morphing operations, effectively balancing the trade-off between model size and performance. This innovative method allows deep learning practitioners to automatically tailor neural network architectures to specific application and hardware requirements, ensuring efficient and effective deployment across various platforms.

TinyNAS and MorphNet represent a few of the many significant advancements in the field of systematic neural network optimization, allowing architectures to be systematically chosen and generated to fit perfectly within problem constraints.

Imagine you’re building a tiny robot that can identify different flowers. It needs to be smart, but also small and energy-efficient! In the “Edge-Aware Model Design” world, we learned about techniques like depthwise separable convolutions and architectures like SqueezeNet, MobileNet, and EfficientNet, all designed to pack intelligence into compact models. Now, let’s see these ideas in action with some Colabs:

SqueezeNet in Action: Maybe you’d like a Colab showing how to train a SqueezeNet model on a flower image dataset. This would demonstrate its small size and how it learns to recognize patterns despite its efficiency.

MobileNet Exploration: Ever wonder if those tiny image models are just as good as the big ones? Let’s find out! In this Colab, we’re pitting MobileNet, the lightweight champion, against a classic image classification model. We’ll race them for speed, measure their memory needs, and see who comes out on top for accuracy. Get ready for a battle of the image brains!

9.3 Efficient Numerics Representation

Numerics representation involves a myriad of considerations, including, but not limited to, the precision of numbers, their encoding formats, and the arithmetic operations facilitated. It invariably involves a rich array of different trade-offs, where practitioners are tasked with navigating between numerical accuracy and computational efficiency. For instance, while lower-precision numerics may offer the allure of reduced memory usage and expedited computations, they concurrently present challenges pertaining to numerical stability and potential degradation of model accuracy.

Motivation

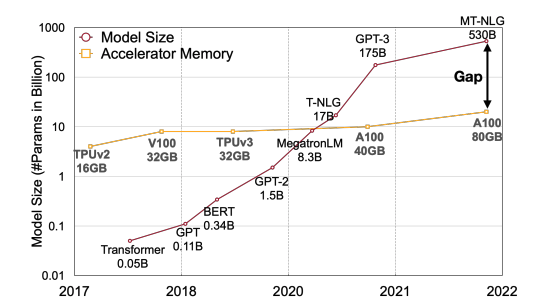

The need for efficient numerics representation arises because model-level optimization alone often falls short when adapting models for deployment on low-powered edge devices operating under stringent constraints.

Beyond minimizing memory demands, efficient numerics representation offers several further benefits. By reducing computational intensity, it increases computational speed, allowing more complex models to run on low-powered devices. Reducing the bit precision of weights and activations in heavily over-parameterized models shrinks model size for edge devices without significantly harming the model’s predictive accuracy. And because neural networks are layered, numeric precision can be varied across layers, lowering precision in layers that tolerate it while preserving higher precision in sensitive layers.

In this section, we will dive into how practitioners can harness the principles of hardware-software co-design at the lowest levels of a model to facilitate compatibility with edge devices. Kicking off with an introduction to the numerics, we will examine its implications for device memory and computational complexity. Subsequently, we will embark on a discussion regarding the trade-offs entailed in adopting this strategy, followed by a deep dive into a paramount method of efficient numerics: quantization.

9.3.1 The Basics

Types

Numerical data, the bedrock upon which machine learning models stand, manifest in two primary forms. These are integers and floating point numbers.

Integers: Whole numbers, devoid of fractional components, integers (e.g., -3, 0, 42) are key in scenarios demanding discrete values. For instance, in ML, class labels in a classification task might be represented as integers, where “cat”, “dog”, and “bird” could be encoded as 0, 1, and 2 respectively.

Floating-Point Numbers: Encompassing real numbers, floating-point numbers (e.g., -3.14, 0.01, 2.71828) afford the representation of values with fractional components. In ML model parameters, weights might be initialized with small floating-point values, such as 0.001 or -0.045, to commence the training process. Currently, there are 4 popular precision formats discussed below.

Variable bit widths: Beyond the standard widths, research is ongoing into extremely low bit-width numerics, even down to binary or ternary representations. Extremely low bit-width operations can offer significant speedups and reduce power consumption even further. While challenges remain in maintaining model accuracy with such drastic quantization, advances continue to be made in this area.

Precision

Precision, delineating the exactness with which a number is represented, is typically divided into double, single, and half precision. In recent years, a number of other formats have emerged to better support machine learning tasks efficiently on the underlying hardware.

Double Precision (Float64): Allocating 64 bits, double precision (e.g., 3.141592653589793) provides heightened accuracy, albeit demanding augmented memory and computational resources. In scientific computations, where precision is paramount, variables like π might be represented with Float64.

Single Precision (Float32): With 32 bits at its disposal, single precision (e.g., 3.1415927) strikes a balance between numerical accuracy and memory conservation. In ML, Float32 might be employed to store weights during training to maintain a reasonable level of precision.

Half Precision (Float16): Constrained to 16 bits, half precision (e.g., 3.14) curtails memory usage and can expedite computations, albeit sacrificing numerical accuracy and range. In ML, especially during inference on resource-constrained devices, Float16 might be utilized to reduce the model’s memory footprint.

Bfloat16: Brain Floating-Point Format or Bfloat16, also employs 16 bits but allocates them differently compared to FP16: 1 bit for the sign, 8 bits for the exponent (resulting in the same number range as in float32), and 7 bits for the fraction. This format, developed by Google, prioritizes a larger exponent range over precision, making it particularly useful in deep learning applications where the dynamic range is crucial.

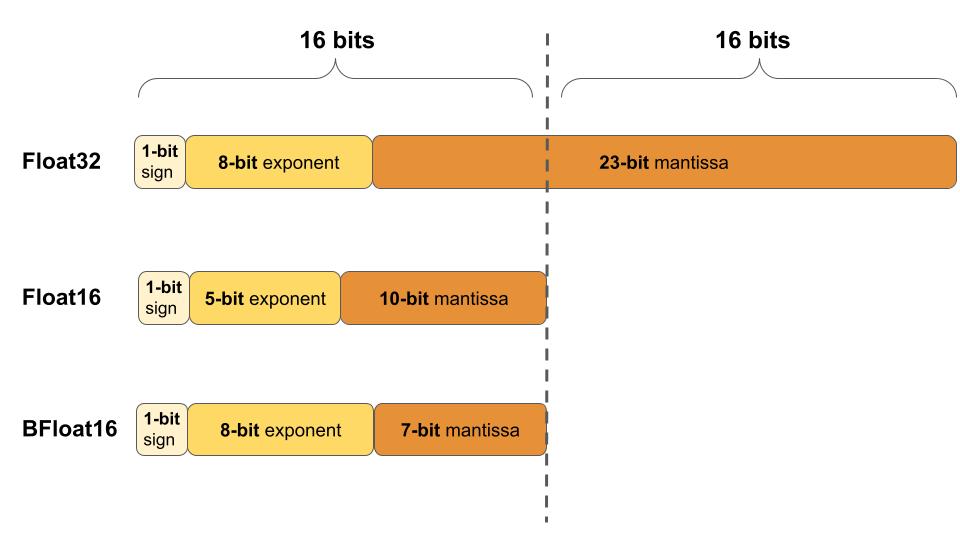

Figure 9.13 illustrates the differences between the three floating-point formats: Float32, Float16, and BFloat16.
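The range-versus-precision difference between Float16 and Bfloat16 can be observed with Python's standard library. This is a rough sketch: Float16 uses the `struct` module's half-precision format, while Bfloat16 is approximated by keeping the top 16 bits of the Float32 encoding (truncation rather than the rounding that hardware typically performs). Both helper names are our own.

```python
import math
import struct

def to_float16(x):
    """Round x to IEEE 754 half precision; values beyond its range become inf."""
    try:
        return struct.unpack("<e", struct.pack("<e", x))[0]
    except (OverflowError, struct.error):
        return math.copysign(math.inf, x)

def to_bfloat16(x):
    """Approximate bfloat16 by truncating the float32 encoding to its top 16 bits."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFF0000))[0]

for value in (math.pi, 1.001, 70000.0):
    print(value, to_float16(value), to_bfloat16(value))
```

With its 10 fraction bits, Float16 resolves 1.001 more finely than Bfloat16 does, while Bfloat16's 8 exponent bits let it represent 70000 (coarsely) where Float16 overflows.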

Integer: Integer representations are made using 8, 4, and 2 bits. They are often used during the inference phase of neural networks, where the weights and activations of the model are quantized to these lower precisions. Integer representations are deterministic and offer significant speed and memory advantages over floating-point representations. For many inference tasks, especially on edge devices, the slight loss in accuracy due to quantization is often acceptable given the efficiency gains. An extreme form of integer numerics is for binary neural networks (BNNs), where weights and activations are constrained to one of two values: either +1 or -1.
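As a small illustration of how floating-point weights map to 8-bit integers, the sketch below applies symmetric linear quantization with a single scale factor. This is one simple scheme among several, and the weight values are hypothetical.

```python
def quantize_int8(values):
    """Symmetric linear quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Map integers back to approximate floating-point values."""
    return [q * scale for q in quantized]

weights = [0.6, -1.0, 0.26, 0.0]  # hypothetical float weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(q, scale, max_error)
```

Each weight is stored in one byte instead of four, and the reconstruction error is bounded by half the scale factor.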

You may refer back to Section 8.6.1 for a table comparison between the trade-offs of different numeric types.

Numeric Encoding and Storage

Numeric encoding, the art of transmuting numbers into a computer-amenable format, and their subsequent storage are critical for computational efficiency. For instance, floating-point numbers might be encoded using the IEEE 754 standard, which apportions bits among sign, exponent, and fraction components, thereby enabling the representation of a vast array of values with a single format. Several newer floating-point formats, most of them defined outside the IEEE 754 standard, target AI workloads specifically:

- bfloat16 - A 16-bit floating point format introduced by Google. It has 8 bits for exponent, 7 bits for mantissa and 1 bit for sign. Offers a reduced precision compromise between 32-bit float and 8-bit integers. Supported on many hardware accelerators.

- posit - A configurable format that can represent different levels of precision based on exponent bits. It is more efficient than IEEE 754 binary floats. Has adjustable dynamic range and precision.

- Flexpoint - A format introduced by Intel that can dynamically adjust precision across layers or within a layer. Allows tuning precision to accuracy and hardware requirements.

- BF16ALT - A proposed 16-bit format by ARM as an alternative to bfloat16. Uses additional bit in exponent to prevent overflow/underflow.

- TF32 - Introduced by Nvidia for Ampere GPUs. Keeps the 8 exponent bits of FP32 but uses a 10-bit mantissa (the same as FP16), for 19 bits in total. Improves model training performance while maintaining accuracy.

- FP8 - 8-bit floating point format. Common variants use 4 exponent bits and 3 mantissa bits (E4M3) or 5 exponent bits and 2 mantissa bits (E5M2), plus 1 sign bit. Enables better dynamic range than integers.

The key goals of these new formats are to provide lower precision alternatives to 32-bit floats for better computational efficiency and performance on AI accelerators while maintaining model accuracy. They offer different tradeoffs in terms of precision, range and implementation cost/complexity.
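To see how a format apportions bits among sign, exponent, and fraction, the sketch below unpacks a value's IEEE 754 single-precision encoding using Python's `struct` module. The helper names are our own, and the reconstruction formula covers only normal numbers (not zero, subnormals, infinity, or NaN).

```python
import struct

def float32_fields(x):
    """Split the float32 encoding of x into (sign, biased exponent, fraction)."""
    bits = struct.unpack(">I", struct.pack(">f", x))[0]
    sign = bits >> 31                # 1 bit
    exponent = (bits >> 23) & 0xFF   # 8 bits, stored with a bias of 127
    fraction = bits & 0x7FFFFF       # 23 bits
    return sign, exponent, fraction

def fields_to_value(sign, exponent, fraction):
    """Rebuild a normal float32 value from its three fields."""
    return (-1) ** sign * (1 + fraction / 2 ** 23) * 2.0 ** (exponent - 127)

sign, exponent, fraction = float32_fields(-0.15625)
print(sign, exponent, hex(fraction))
print(fields_to_value(sign, exponent, fraction))
```

The reduced-precision formats listed above follow the same sign, exponent, and fraction layout but change how many bits each field receives.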

9.3.2 Efficiency Benefits

As you learned in Section 8.6.2, numerical efficiency matters for machine learning workloads for a number of reasons. Efficient numerics is not just about reducing the bit-width of numbers but understanding the trade-offs between accuracy and efficiency. As machine learning models become more pervasive, especially in real-world, resource-constrained environments, the focus on efficient numerics will continue to grow. By thoughtfully selecting and leveraging the appropriate numeric precision, one can achieve robust model performance while optimizing for speed, memory, and energy.

9.3.3 Numeric Representation Nuances

There are a number of nuances with numerical representations for ML that require us to have an understanding of both the theoretical and practical aspects of numerics representation, as well as a keen awareness of the specific requirements and constraints of the application domain.

Memory Usage

The memory footprint of ML models, particularly those of considerable complexity and depth, can be substantial, thereby posing a significant challenge in both training and deployment phases. For instance, a deep neural network with 100 million parameters, represented using Float32 (32 bits or 4 bytes per parameter), would necessitate approximately 400 MB of memory just for storing the model weights. This does not account for additional memory requirements during training for storing gradients, optimizer states, and forward pass caches, which can further amplify the memory usage, potentially straining the resources on certain hardware, especially edge devices with limited memory capacity.

The choice of numeric representation further impacts memory usage and computational efficiency. For example, using Float64 for model weights would double the memory requirements compared to Float32, and could potentially increase computational time as well. For a weight matrix with dimensions [1000, 1000], Float64 would consume approximately 8MB of memory, while Float32 would reduce this to about 4MB. Thus, selecting an appropriate numeric format is crucial for optimizing both memory and computational efficiency.
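The arithmetic behind these figures is simple enough to script. The sketch below estimates weight storage only, using decimal megabytes (1 MB = 10^6 bytes); the dictionary and function names are our own.

```python
# Bytes needed to store one value in each format.
BYTES_PER_VALUE = {
    "float64": 8,
    "float32": 4,
    "float16": 2,
    "bfloat16": 2,
    "int8": 1,
}

def weight_memory_mb(num_params, dtype):
    """Memory for the weights alone, in megabytes (10^6 bytes)."""
    return num_params * BYTES_PER_VALUE[dtype] / 1e6

# The 100-million-parameter network from the text.
for dtype in BYTES_PER_VALUE:
    print(dtype, weight_memory_mb(100_000_000, dtype), "MB")

# The [1000, 1000] weight matrix from the text.
matrix_params = 1000 * 1000
print(weight_memory_mb(matrix_params, "float64"), weight_memory_mb(matrix_params, "float32"))
```

Moving the same 100-million-parameter model from Float32 to an 8-bit integer format cuts weight storage from 400 MB to 100 MB, before accounting for any training-time state.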

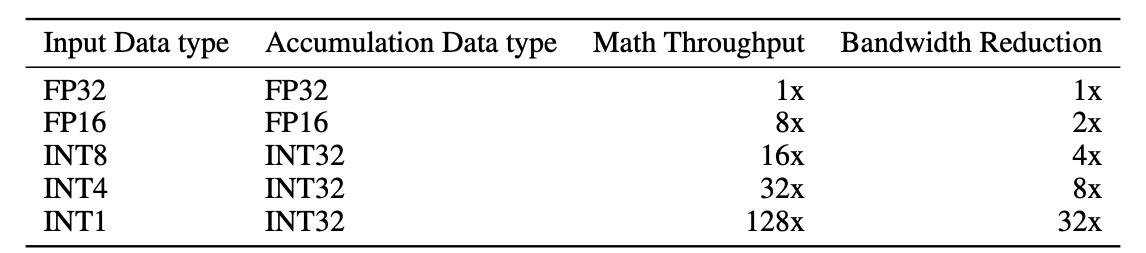

Computational Complexity

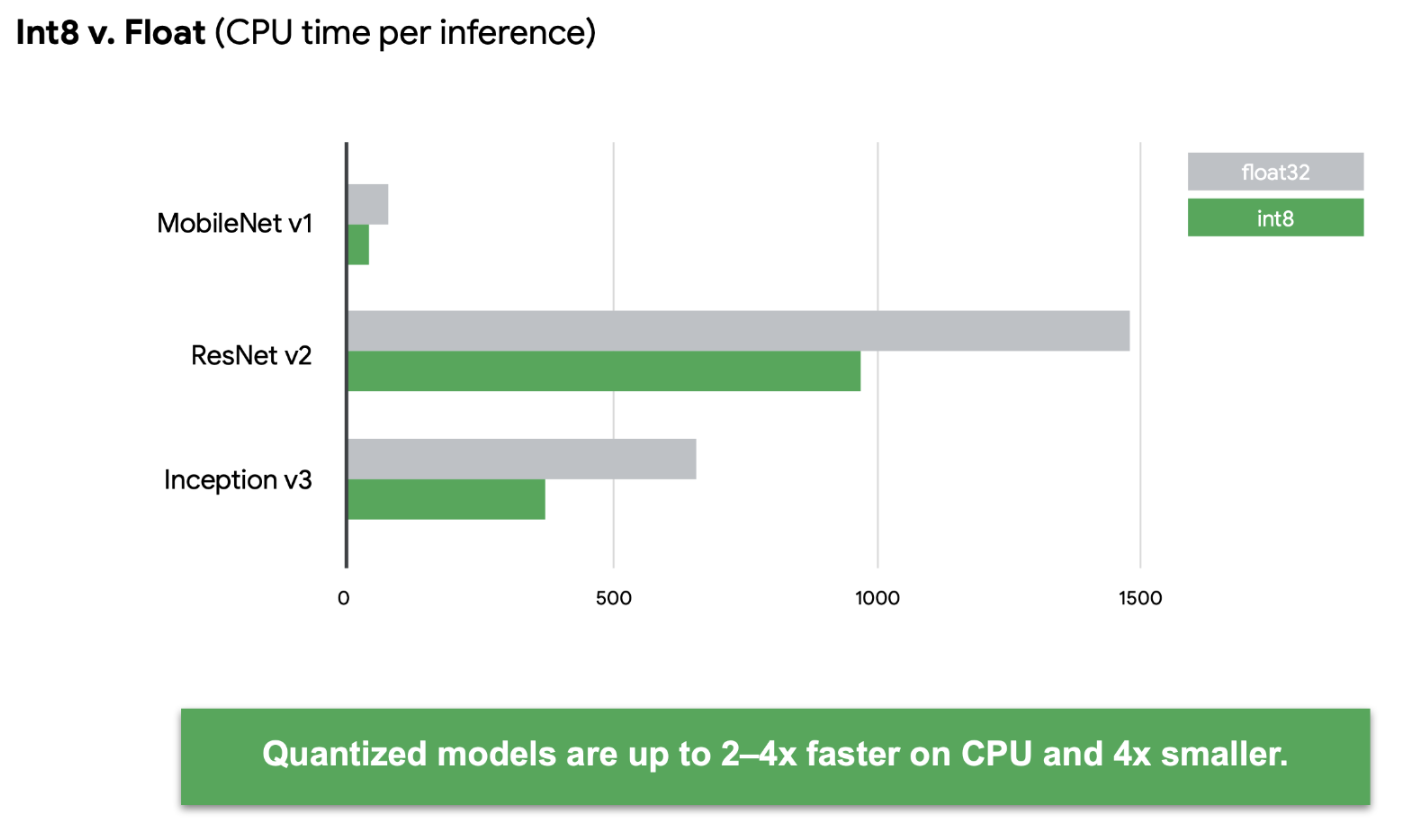

Numerical precision directly impacts computational complexity, influencing the time and resources required to perform arithmetic operations. For example, operations using Float64 generally consume more computational resources than their Float32 or Float16 counterparts (see Figure 9.14). In the realm of ML, where models might need to process millions of operations (e.g., multiplications and additions in matrix operations during forward and backward passes), even minor differences in the computational complexity per operation can aggregate into a substantial impact on training and inference times. As shown in Figure 9.15, quantized models can be many times faster than their unquantized versions.

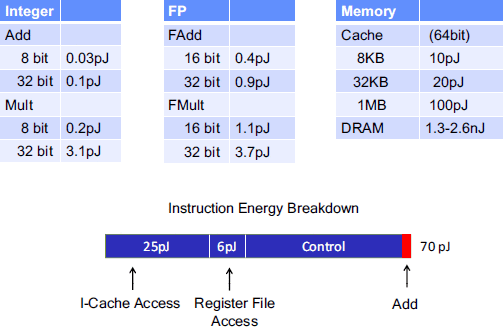

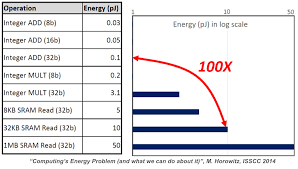

In addition to pure runtimes, there is also a concern over energy efficiency. Not all numerical computations are created equal from the underlying hardware standpoint. Some numerical operations are more energy efficient than others. For example, Figure 9.16 below shows that integer addition is much more energy efficient than integer multiplication.

Hardware Compatibility

Ensuring compatibility and optimized performance across diverse hardware platforms is another challenge in numerics representation. Different hardware, such as CPUs, GPUs, TPUs, and FPGAs, have varying capabilities and optimizations for handling different numeric precisions. For example, certain GPUs might be optimized for Float32 computations, while others might provide accelerations for Float16. Developing and optimizing ML models that can leverage the specific numerical capabilities of different hardware, while ensuring that the model maintains its accuracy and robustness, requires careful consideration and potentially additional development and testing efforts.

Precision and Accuracy Trade-offs

The trade-off between numerical precision and model accuracy is a nuanced challenge in numerics representation. Utilizing lower-precision numerics, such as Float16, might conserve memory and expedite computations but can also introduce issues like quantization error and reduced numerical range. For instance, training a model with Float16 might introduce challenges in representing very small gradient values, potentially impacting the convergence and stability of the training process. Furthermore, in certain applications, such as scientific simulations or financial computations, where high precision is paramount, the use of lower-precision numerics might not be permissible due to the risk of accruing significant errors.
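The small-gradient problem can be reproduced directly. The sketch below rounds a hypothetical gradient value to Float16 and Float32 using Python's `struct` module; the value 1e-8 is an assumption chosen to fall below the smallest positive number Float16 can represent (2^-24, roughly 6e-8).

```python
import struct

def to_float16(x):
    """Round x to IEEE 754 half precision."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def to_float32(x):
    """Round x to IEEE 754 single precision."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

tiny_gradient = 1e-8  # hypothetical gradient magnitude

as_half = to_float16(tiny_gradient)
as_single = to_float32(tiny_gradient)
smallest_half = to_float16(2 ** -24)  # smallest positive float16 (subnormal)

print(as_half, as_single, smallest_half)
```

In Float16 the gradient is flushed to zero, so the corresponding weight would never be updated, whereas Float32 preserves it.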

Trade-off Examples

To appreciate these nuances, let’s consider a few use cases that tie the trade-offs to the real world. They show that the choice of numeric representation is not merely a technical decision but a strategic one, influencing the model’s predictive acumen, its computational demands, and its deployability across diverse computational environments.

Autonomous Vehicles

In the domain of autonomous vehicles, ML models are employed to interpret sensor data and make real-time decisions. The models must process high-dimensional data from various sensors (e.g., LiDAR, cameras, radar) and execute numerous computations within a constrained time frame to ensure safe and responsive vehicle operation. So the trade-offs here would include:

- Memory Usage: Storing and processing high-resolution sensor data, especially in floating-point formats, can consume substantial memory.

- Computational Complexity: Real-time processing demands efficient computations, where higher-precision numerics might impede the timely execution of control actions.

Mobile Health Applications

Mobile health applications often use ML models for tasks like activity recognition, health monitoring, or predictive analytics, operating within the resource-constrained environment of mobile devices. The trade-offs here would include:

- Precision and Accuracy Trade-offs: Employing lower-precision numerics to conserve resources might impact the accuracy of health predictions or anomaly detections, which could have significant implications for user health and safety.

- Hardware Compatibility: Models need to be optimized for diverse mobile hardware, ensuring efficient operation across a wide range of devices with varying numerical computation capabilities.

High-Frequency Trading (HFT) Systems

HFT systems leverage ML models to make rapid trading decisions based on real-time market data. These systems demand extremely low-latency responses to capitalize on short-lived trading opportunities.

- Computational Complexity: The models must process and analyze vast streams of market data with minimal latency, where even slight delays, potentially introduced by higher-precision numerics, can result in missed opportunities.

- Precision and Accuracy Trade-offs: Financial computations often demand high numerical precision to ensure accurate pricing and risk assessments, posing challenges in balancing computational efficiency and numerical accuracy.

Edge-Based Surveillance Systems

Surveillance systems deployed on edge devices, like security cameras, use ML models for tasks like object detection, activity recognition, and anomaly detection, often operating under stringent resource constraints.

- Memory Usage: Storing pre-trained models and processing video feeds in real-time demands efficient memory usage, which can be challenging with high-precision numerics.

- Hardware Compatibility: Ensuring that models can operate efficiently on edge devices with varying hardware capabilities and optimizations for different numeric precisions is crucial for widespread deployment.

Scientific Simulations

ML models are increasingly being utilized in scientific simulations, such as climate modeling or molecular dynamics simulations, to improve predictive capabilities and reduce computational demands.

- Precision and Accuracy Trade-offs: Scientific simulations often require high numerical precision to ensure accurate and reliable results, which can conflict with the desire to reduce computational demands via lower-precision numerics.

- Computational Complexity: The models must manage and process complex, high-dimensional simulation data efficiently to ensure timely results and enable large-scale or long-duration simulations.

These examples illustrate diverse scenarios in which the challenges of numeric representation in ML models are prominent. Each system presents a unique set of requirements and constraints, necessitating tailored strategies to navigate the challenges of memory usage, computational complexity, precision-accuracy trade-offs, and hardware compatibility.

9.3.4 Quantization

Quantization is prevalent in various scientific and technological domains, and it essentially involves the mapping or constraining of a continuous set or range into a discrete counterpart to minimize the number of bits required.

Initial Breakdown

We begin our foray into quantization with a brief analysis of one important use for quantization.

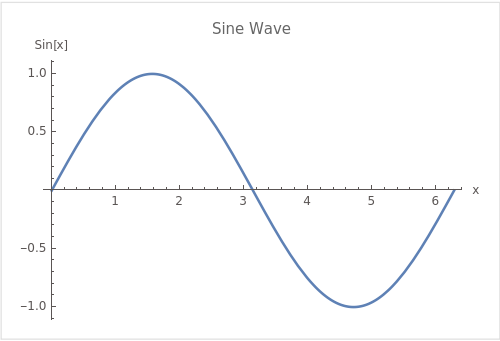

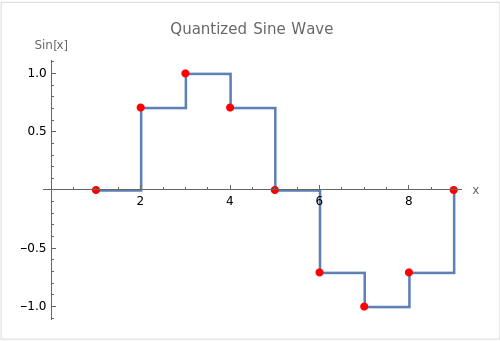

In signal processing, the continuous sine wave (shown in Figure 9.17) can be quantized into discrete values through a process known as sampling. This is a fundamental concept in digital signal processing and is crucial for converting analog signals (like the continuous sine wave) into a digital form that can be processed by computers. The sine wave is a prevalent example due to its periodic and smooth nature, making it a useful tool for explaining concepts like frequency, amplitude, phase, and, of course, quantization.

In the quantized version shown in Figure 9.18, the continuous sine wave (Figure 9.17) is sampled at regular intervals (in this case, every \(\frac{\pi}{4}\) radians), and only these sampled values are represented in the digital version of the signal. The step-wise lines between the points show one way to represent the quantized signal in a piecewise-constant form. This is a simplified example of how analog-to-digital conversion works, where a continuous signal is mapped to a discrete set of values, enabling it to be represented and processed digitally.
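The sampling step described above can be sketched in a few lines. This is a minimal illustration, assuming one period of the sine wave and a zero-order hold between samples; the names `step`, `samples`, and `hold` are hypothetical and not taken from the figures.

```python
import math

# Sample one period of sin(t) every pi/4 radians: t = 0, pi/4, ..., 2*pi.
step = math.pi / 4
samples = [(k * step, math.sin(k * step)) for k in range(9)]


def hold(t):
    """Piecewise-constant signal: return the value of the most recent sample.

    Assumes 0 <= t <= 2*pi.
    """
    index = min(int(t // step), len(samples) - 1)
    return samples[index][1]


for t, value in samples:
    print(f"t = {t:.3f}  sin(t) = {value:+.3f}")
```

Between two sample points, `hold` returns the earlier sample's value, which corresponds to the step-wise lines in the quantized plot.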

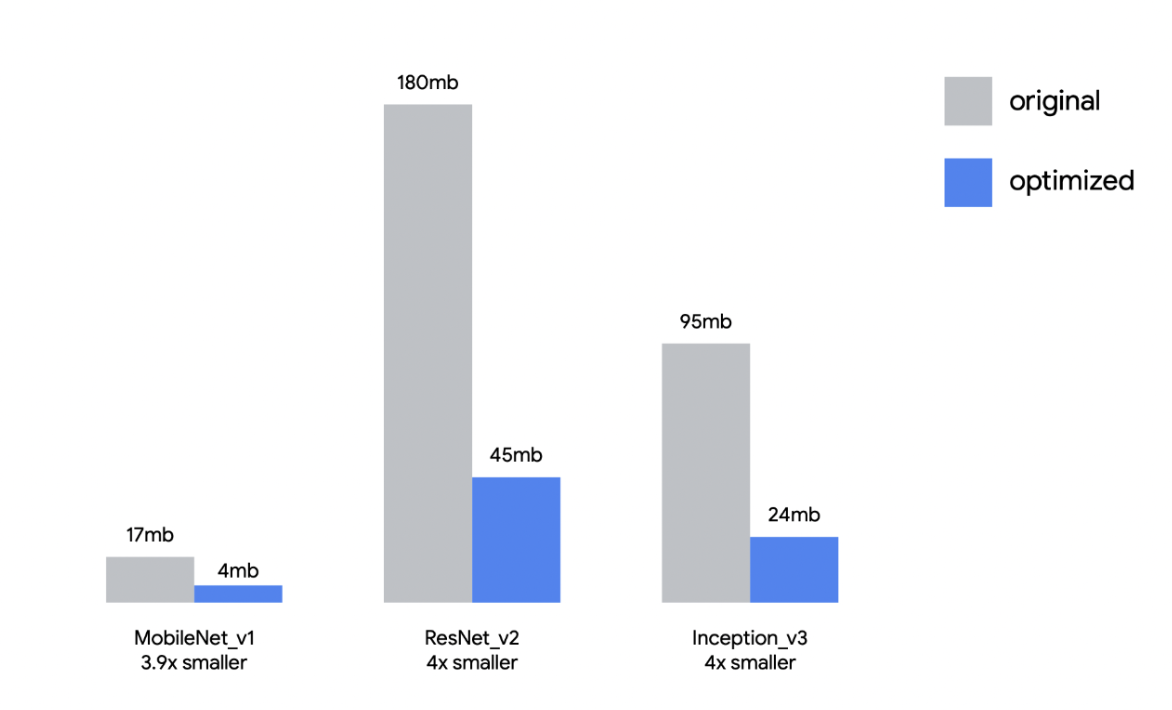

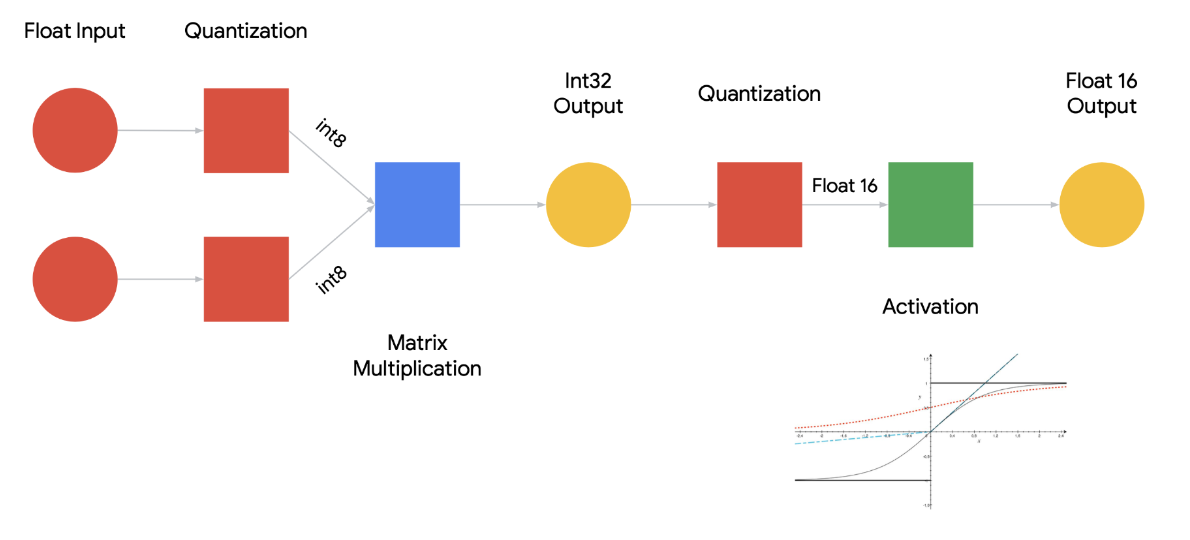

Returning to the context of Machine Learning (ML), quantization refers to the process of constraining the possible values that numerical parameters (such as weights and biases) can take to a discrete set, thereby reducing the precision of the parameters and, consequently, the model’s memory footprint. When properly implemented, quantization can reduce model size by up to 4x and improve inference latency and throughput by up to 2-3x. Figure 9.19 illustrates the impact that quantization has on different models’ sizes: for example, an image classification model like ResNet-v2 can be compressed from 180MB down to 45MB with 8-bit quantization. Well-tuned quantization typically costs less than 1% in model accuracy, and lost accuracy can often be recovered by retraining the model with quantization-aware training techniques. Quantization has therefore become an important technique for deploying ML models to resource-constrained environments, such as mobile devices, IoT devices, and edge computing platforms, where computational resources (memory and processing power) are limited.

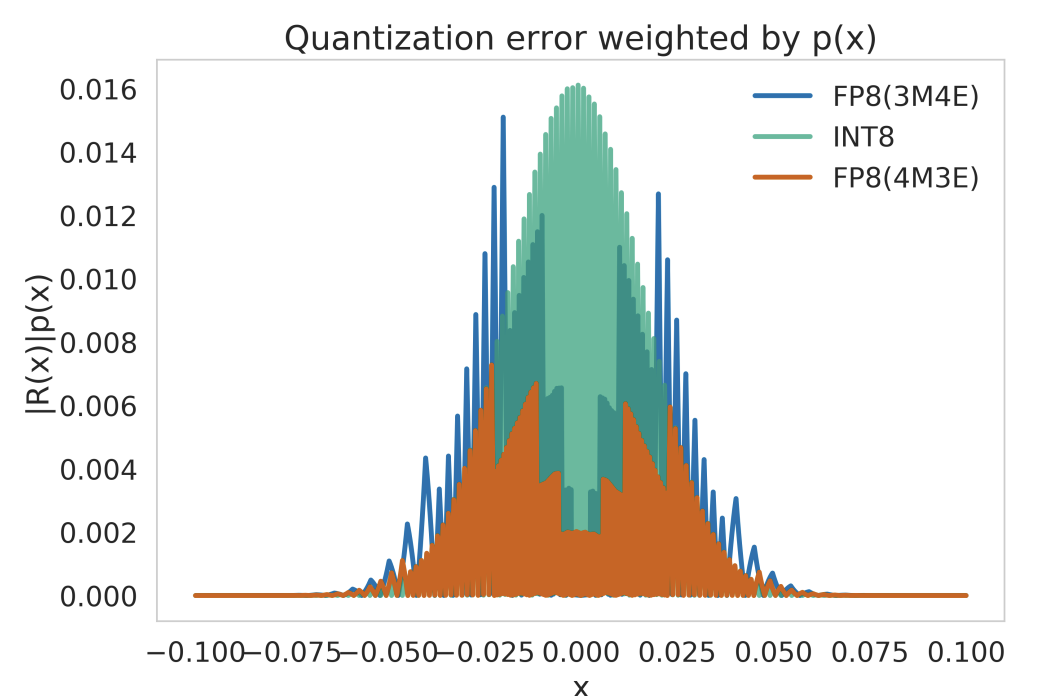

There are several dimensions to quantization such as uniformity, stochasticity (or determinism), symmetry, granularity (across layers/channels/groups or even within channels), range calibration considerations (static vs dynamic), and fine-tuning methods (QAT, PTQ, ZSQ). We examine these below.

9.3.5 Types

Uniform Quantization

Uniform quantization involves mapping continuous or high-precision values to a lower-precision representation using a uniform scale. This means that the interval between each possible quantized value is consistent. For example, if the weights of a neural network layer are quantized to 8-bit integers (values between 0 and 255), a weight with a floating-point value of 0.56 might be mapped to an integer value of 143, assuming a linear mapping from a real range of, say, [0, 1] onto the integer range (0.56 × 255 ≈ 143). Because the quantized levels are evenly spaced, computation can be carried out with integer or fixed-point arithmetic directly in the quantized domain, without the need to dequantize beforehand.

The process for implementing uniform quantization starts with choosing a range of real numbers to be quantized. The next step is to select a quantization function and map the real values to the integers representable by the bit-width of the quantized representation. For instance, a popular choice for a quantization function is:

\[ Q(r)=Int(r/S) - Z \]

where \(Q\) is the quantization operator, \(r\) is a real valued input (in our case, an activation or weight), \(S\) is a real valued scaling factor, and \(Z\) is an integer zero point. The Int function maps a real value to an integer value through a rounding operation. Through this function, we have effectively mapped real values \(r\) to some integer values, resulting in quantized levels which are uniformly spaced.

When the need arises for practitioners to retrieve the original higher precision values, real values \(r\) can be recovered from quantized values through an operation known as dequantization. In the example above, this would mean performing the following operation on our quantized value:

\[ \bar{r} = S(Q(r) + Z) \]

As discussed, some precision in the real value is lost by quantization. In this case, the recovered value \(\bar{r}\) will not exactly match \(r\) due to the rounding operation. This is an important tradeoff to note; however, in many successful uses of quantization, the loss of precision is negligible and the test accuracy remains high. For this reason, together with its simplicity and efficient mapping to hardware, uniform quantization remains the de facto choice.
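The quantization and dequantization formulas above can be tried out directly. The following is a minimal sketch, assuming a real range of [0, 1], unsigned 8-bit targets, and round-to-nearest for the Int function. The way `scale` and `zero_point` are derived from the range is one common choice rather than the only one, and clipping of out-of-range inputs is omitted.

```python
def quantize(r, scale, zero_point):
    """Q(r) = Int(r / S) - Z, with Int taken to be round-to-nearest.

    Note: Python's round() resolves exact .5 ties to the nearest even integer.
    """
    return round(r / scale) - zero_point


def dequantize(q, scale, zero_point):
    """r_bar = S * (Q(r) + Z)."""
    return scale * (q + zero_point)


# Assumed setup: real values in [0.0, 1.0] mapped onto integers 0..255.
r_min, r_max = 0.0, 1.0
q_min, q_max = 0, 255
scale = (r_max - r_min) / (q_max - q_min)   # S
zero_point = round(r_min / scale) - q_min   # Z, chosen so that r_min maps to q_min

q = quantize(0.56, scale, zero_point)
r_bar = dequantize(q, scale, zero_point)
print(q, r_bar, abs(r_bar - 0.56))
```

Under these assumptions, 0.56 maps to 143, and the recovered value differs from 0.56 by less than half a quantization step (`scale / 2`), which is the rounding error described above.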

Non-uniform Quantization

Non-uniform quantization, on the other hand, does not maintain a consistent interval between quantized values. This approach might be used to allocate more possible discrete values in regions where the parameter values are more densely populated, thereby preserving more detail where it is most needed. For instance, when the weights of a model follow a bell-shaped distribution with long tails, most of the weights lie within a narrow range; more quantization levels can be allocated to that range to preserve finer details and better capture information. However, one major weakness of non-uniform quantization is that it requires dequantization before higher-precision computations due to its non-uniformity, restricting its ability to accelerate computation compared to uniform quantization.

Typically, rule-based non-uniform quantization uses a logarithmic distribution, in which the quantization steps and levels increase exponentially rather than linearly. Another popular branch is binary-code-based quantization, where real-valued vectors are quantized into binary vectors with a scaling factor. Notably, there is no closed-form solution for minimizing the error between the real value and the non-uniformly quantized value, so most quantization methods in this field rely on heuristic solutions. For instance, work by Xu et al. (2018) formulates non-uniform quantization as an optimization problem where the quantization steps/levels in quantizer \(Q\) are adjusted to minimize the difference between the original tensor and its quantized counterpart.

\[ \min_Q ||Q(r)-r||^2 \]

Furthermore, learnable quantizers can be jointly trained with model parameters, and the quantization steps/levels are generally trained with iterative optimization or gradient descent. Additionally, clustering has been used to alleviate information loss from quantization. While capable of capturing higher levels of detail, non-uniform quantization schemes can be difficult to deploy efficiently on general computation hardware, making them less preferred than methods which use uniform quantization.
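To make the objective \(\min_Q ||Q(r)-r||^2\) concrete, the sketch below evaluates it for two fixed sets of sixteen levels: logarithmically spaced (powers of two) and uniformly spaced. The weight values are made up for illustration and are deliberately concentrated near zero; the helper names are hypothetical, and no levels are learned here.

```python
def nearest_level(r, levels):
    """The quantizer Q: map r to the closest entry of `levels`."""
    return min(levels, key=lambda level: abs(level - r))


def squared_error(values, levels):
    """The objective ||Q(r) - r||^2, summed over all values."""
    return sum((nearest_level(v, levels) - v) ** 2 for v in values)


# Sixteen logarithmic levels: +/- 2^0, 2^-1, ..., 2^-7.
log_levels = sorted(sign * 2.0 ** -k for k in range(8) for sign in (-1, 1))

# Sixteen evenly spaced levels over the same [-1, 1] range.
uniform_levels = [-1.0 + 2.0 * i / 15 for i in range(16)]

# Made-up weights, concentrated near zero as in a bell-shaped distribution.
weights = [0.003, -0.01, 0.02, -0.04, 0.05, 0.08, -0.12, 0.25]

print("logarithmic:", squared_error(weights, log_levels))
print("uniform:    ", squared_error(weights, uniform_levels))
```

On this hand-picked set the logarithmic levels give the smaller error because they are densest near zero, where the weights sit. The comparison is illustrative only and can reverse for weights spread evenly across the range.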

Stochastic Quantization

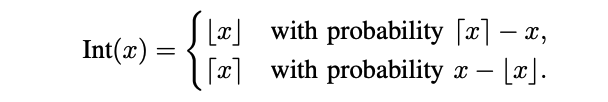

Unlike the two previous approaches, which generate deterministic mappings, stochastic quantization has been explored for quantization-aware training and reduced-precision training. This approach rounds floating-point numbers up or down with a probability associated with the magnitude of the weight update. The intuition is that such a probabilistic approach may allow a neural network to explore more than deterministic quantization does. In particular, stochastic rounding may give the network an opportunity to escape local optima and thereby keep updating its parameters. Below are two example stochastic mapping functions:
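As a hedged illustration of stochastic mapping functions, the sketch below assumes two formulations that are common in the literature: stochastic rounding, which rounds up with probability equal to the fractional part of the input, and stochastic binarization, which returns +1 with probability \(\sigma(x)\) (the logistic sigmoid) and -1 otherwise. Both function names are hypothetical, and these definitions are assumptions for illustration.

```python
import math
import random


def stochastic_round(x, rng):
    """Round x down or up; the probability of rounding up is x - floor(x)."""
    lower = math.floor(x)
    return lower + (1 if rng.random() < x - lower else 0)


def stochastic_binarize(x, rng):
    """Return +1 with probability sigmoid(x), otherwise -1."""
    return 1 if rng.random() < 1.0 / (1.0 + math.exp(-x)) else -1


rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [stochastic_round(2.3, rng) for _ in range(10_000)]
mean = sum(samples) / len(samples)
print(sorted(set(samples)), mean)
```

Each individual result is either 2 or 3, but the average over many draws stays close to 2.3. This unbiasedness is why small updates that deterministic rounding would always discard can still accumulate under stochastic rounding.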

Zero Shot Quantization

Zero-shot quantization refers to the process of converting a full-precision deep learning model directly into a low-precision, quantized model without the need for any retraining or fine-tuning on the quantized model. The primary advantage of this approach is its efficiency, as it eliminates the often time-consuming and resource-intensive process of retraining a model post-quantization. By leveraging techniques that anticipate and minimize quantization errors, zero-shot quantization aims to preserve the model’s original accuracy even after reducing its numerical precision. It is particularly useful for Machine Learning as a Service (MLaaS) providers aiming to expedite the deployment of their customers’ workloads without having to access their datasets.

9.3.6 Calibration

Calibration is the process of selecting the most effective clipping range [\(\alpha\), \(\beta\)] for weights and activations to be quantized to. For example, consider quantizing activations that originally have a floating-point range between -6 and 6 to 8-bit integers. Simply mapping this full floating-point range onto the minimum and maximum possible 8-bit integer values (-128 to 127) might not be the most effective choice. Instead, calibration involves passing a representative dataset through the model and then using the observed range of values for quantization.

There are many calibration methods; a few commonly used ones are:

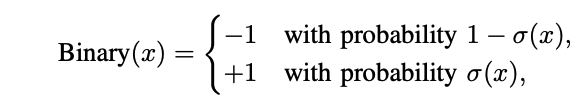

- Max: Use the maximum absolute value seen during calibration. However, this method is susceptible to outlier data. Notice how in Figure 9.22, we have an outlier cluster around 2.1, while the rest are clustered around smaller values.

- Entropy: Use KL divergence to minimize information loss between the original floating-point values and values that could be represented by the quantized format. This is the default method used by TensorRT.

- Percentile: Set the range to a percentile of the distribution of absolute values seen during calibration. For example, 99% calibration would clip 1% of the largest magnitude values.
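The Max and Percentile methods listed above can be compared on a small example. This is a minimal sketch, assuming a made-up set of 100 activation values with a single outlier at 2.1 and a nearest-rank definition of the percentile. The function names are hypothetical and do not correspond to any library's API, and the entropy method is not shown.

```python
import math


def max_calibration(values):
    """Clipping threshold = largest absolute value seen during calibration."""
    return max(abs(v) for v in values)


def percentile_calibration(values, percentile):
    """Clipping threshold = given percentile of absolute values (nearest rank)."""
    magnitudes = sorted(abs(v) for v in values)
    rank = max(1, math.ceil(percentile * len(magnitudes) / 100))
    return magnitudes[rank - 1]


# Made-up calibration data: 99 values between 0.01 and 0.99, plus one outlier.
activations = [0.01 * i for i in range(1, 100)] + [2.1]

max_threshold = max_calibration(activations)
p99_threshold = percentile_calibration(activations, 99)

# Step size for signed 8-bit quantization with each threshold.
print("max:", max_threshold, "step:", max_threshold / 127)
print("99%:", p99_threshold, "step:", p99_threshold / 127)
```

With the outlier included, max calibration more than doubles the step size applied to every other value, whereas the 99th-percentile threshold clips the outlier and keeps a finer step for the remaining 99 values.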

Importantly, the quality of calibration can make a difference between a quantized model that retains most of its accuracy and one that degrades significantly. Hence, it’s an essential step in the quantization process. When choosing a calibration range, there are two types: symmetric and asymmetric.

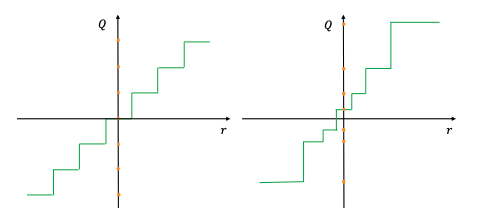

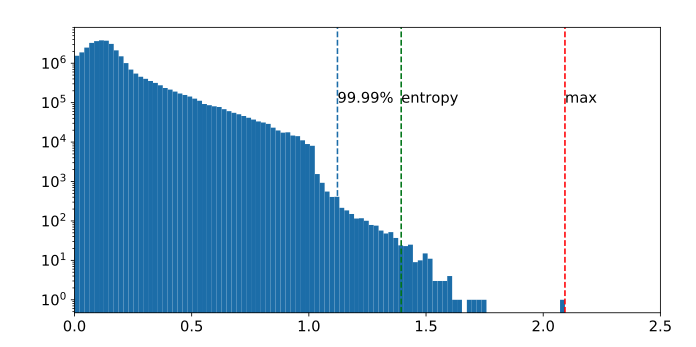

Symmetric Quantization

Symmetric quantization maps real values to a symmetrical clipping range centered around 0. This involves choosing a range [\(\alpha\), \(\beta\)] where \(\alpha = -\beta\). For example, one symmetrical range would be based on the min/max values of the real values such that:

\[ -\alpha = \beta = max(abs(r_{max}), abs(r_{min})) \]

Symmetric clipping ranges are the most widely adopted in practice as they have the advantage of easier implementation. In particular, because the real value zero maps to the integer zero, the zero point can be set to \(Z = 0\) (sometimes called “zeroing out of the zero point”), which can reduce computational cost during inference (Wu, Judd, and Isaev 2020).

Asymmetric Quantization

Asymmetric quantization maps real values to an asymmetrical clipping range that isn’t necessarily centered around 0, as shown in Figure 9.23 on the right. It involves choosing a range [\(\alpha\), \(\beta\)] where \(\alpha \neq -\beta\). For example, selecting a range based on the minimum and maximum real values, that is, \(\alpha = r_{min}\) and \(\beta = r_{max}\), creates an asymmetric range. Typically, asymmetric quantization produces tighter clipping ranges than symmetric quantization, which is important when target weights and activations are imbalanced, e.g., the activation after a ReLU always has non-negative values. Despite producing tighter clipping ranges, asymmetric quantization is less preferred than symmetric quantization because it does not always map the real value zero to the integer zero, so the zero point cannot simply be dropped.
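The difference between the two range types can be seen by computing the scaling factor \(S\) and zero point \(Z\) for the same data. The sketch below assumes post-ReLU activations in [0, 6], signed 8-bit targets, and the convention \(Q(r)=Int(r/S) - Z\). The symmetric case uses the restricted integer range [-127, 127], which is one common choice among several; the helper names are hypothetical.

```python
def symmetric_params(r_min, r_max, num_bits=8):
    """-alpha = beta = max(|r_min|, |r_max|); the zero point is always 0."""
    beta = max(abs(r_min), abs(r_max))
    q_max = 2 ** (num_bits - 1) - 1          # 127 for 8 bits
    return beta / q_max, 0


def asymmetric_params(r_min, r_max, num_bits=8):
    """alpha = r_min, beta = r_max; the zero point shifts alpha onto q_min."""
    q_min = -(2 ** (num_bits - 1))           # -128 for 8 bits
    q_max = 2 ** (num_bits - 1) - 1          # 127 for 8 bits
    scale = (r_max - r_min) / (q_max - q_min)
    zero_point = round(r_min / scale) - q_min
    return scale, zero_point


def quantize(r, scale, zero_point):
    """Q(r) = Int(r / S) - Z with round-to-nearest."""
    return round(r / scale) - zero_point


sym_scale, sym_zero = symmetric_params(0.0, 6.0)
asym_scale, asym_zero = asymmetric_params(0.0, 6.0)

print("symmetric: ", sym_scale, sym_zero, quantize(0.0, sym_scale, sym_zero))
print("asymmetric:", asym_scale, asym_zero, quantize(0.0, asym_scale, asym_zero))
```

For this non-negative data the asymmetric step is about half the symmetric one, since all 256 integer codes cover [0, 6] instead of only the non-negative half. The cost is a non-zero zero point (128 here), so the real value 0 is stored as the integer -128 rather than 0.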

Granularity

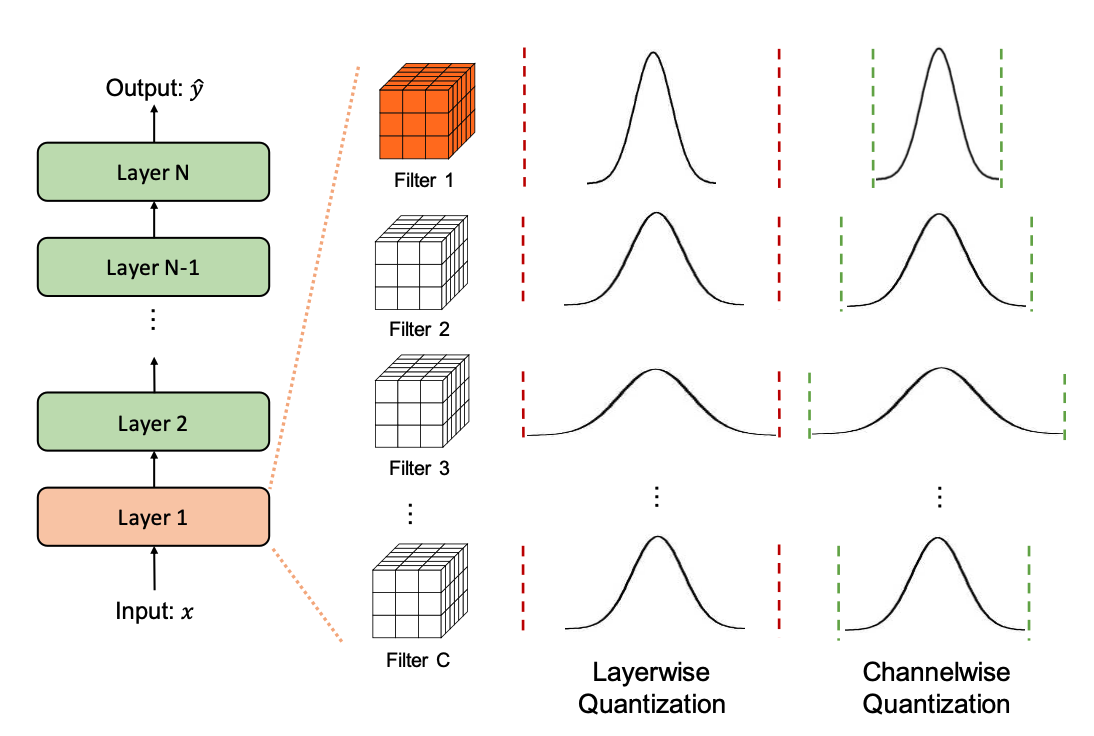

Upon deciding the type of clipping range, it is essential to tighten the range to allow a model to retain as much of its accuracy as possible. We’ll take convolutional neural networks as our way of exploring methods that fine-tune the granularity of clipping ranges for quantization. The input activation of a layer in our CNN undergoes convolution with multiple convolutional filters. Every convolutional filter can possess a unique range of values. Notice how in Figure 9.24, the range for Filter 1 is much smaller than that for Filter 3. Consequently, one distinguishing feature of quantization approaches is the granularity at which the clipping range [\(\alpha\), \(\beta\)] is determined for the weights.

- Layerwise Quantization: This approach determines the clipping range by considering all of the weights in the convolutional filters of a layer. Then, the same clipping range is used for all convolutional filters. It is the simplest to implement, but it often results in sub-optimal accuracy due to the wide variety of differing ranges between filters. For example, a convolutional kernel with a narrower range of parameters loses its quantization resolution due to another kernel in the same layer having a wider range.

- Groupwise Quantization: This approach groups different channels inside a layer to calculate the clipping range. This method can be helpful when the distribution of parameters across a single convolution/activation varies a lot. In practice, this method was useful in Q-BERT (Shen et al. 2020) for quantizing Transformer (Vaswani et al. 2017) models that consist of fully-connected attention layers. The downside of this approach is the extra cost of accounting for different scaling factors.

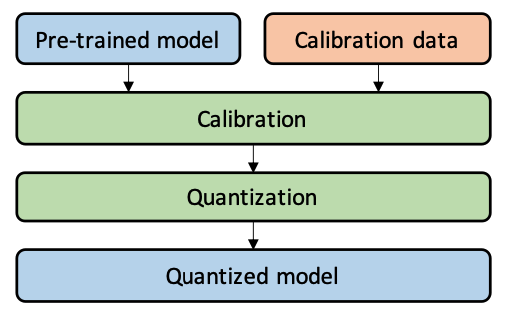

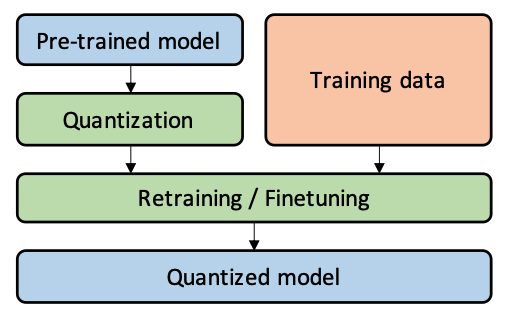

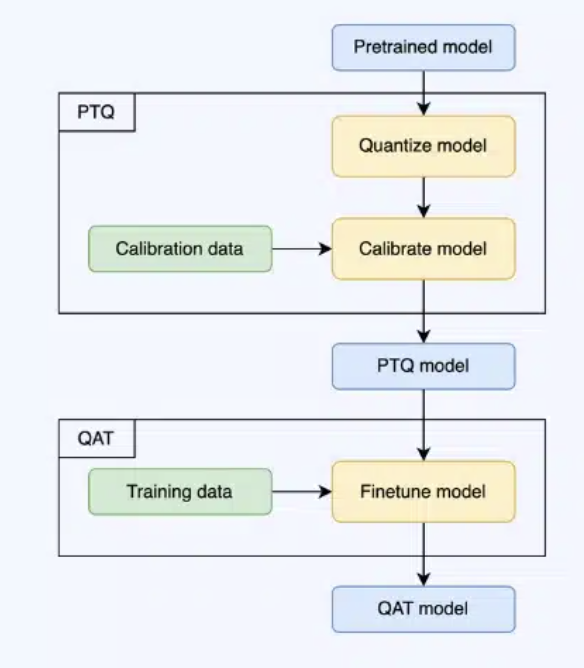

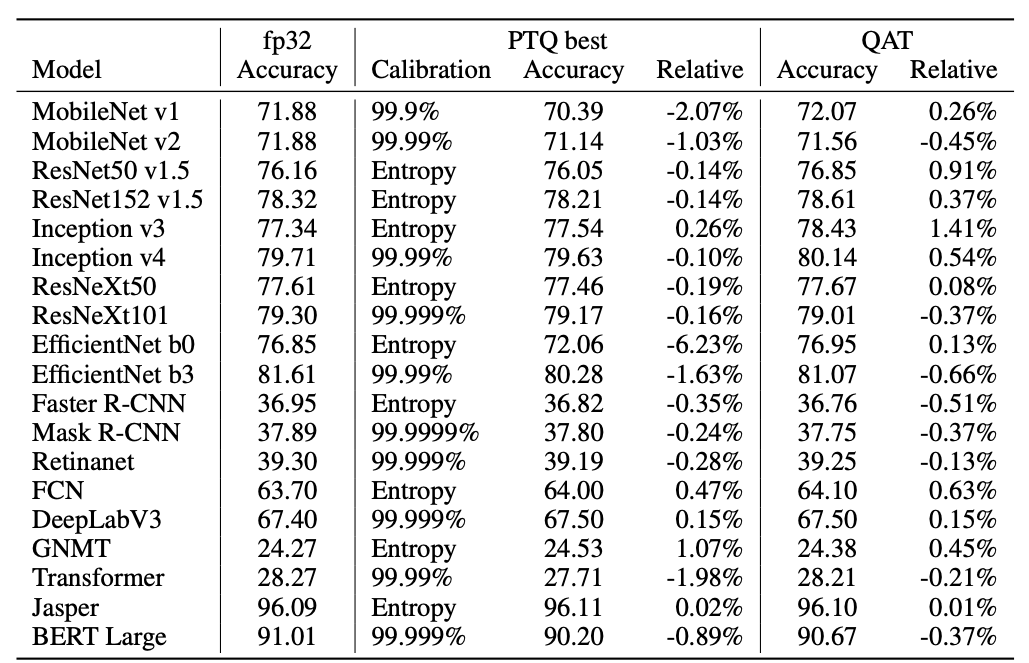

- Channelwise Quantization: This popular method uses a fixed range for each convolutional filter that is independent of other channels. Because each channel is assigned a dedicated scaling factor, this method ensures a higher quantization resolution and often results in higher accuracy.
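The effect of granularity on quantization resolution can be sketched by comparing layerwise and channelwise scaling factors on the same weights. The example assumes three made-up filters with very different ranges, symmetric signed 8-bit quantization, and mean squared error as the measure of lost resolution; all names are hypothetical.

```python
def symmetric_scale(values, q_max=127):
    """Scaling factor from the largest magnitude among `values`."""
    return max(abs(v) for v in values) / q_max


def fake_quantize(values, scale):
    """Quantize to integers, then dequantize back to real values."""
    return [round(v / scale) * scale for v in values]


def mean_squared_error(original, recovered):
    return sum((a - b) ** 2 for a, b in zip(original, recovered)) / len(original)


# Made-up filters: the first has a narrow range, the last a wide one.
filters = [
    [0.011, -0.023, 0.017, -0.008],
    [0.21, -0.35, 0.12, 0.40],
    [2.3, -1.7, 0.9, -2.9],
]

# Layerwise: one scaling factor shared by every filter in the layer.
layer_scale = symmetric_scale([w for f in filters for w in f])
layerwise_error = [
    mean_squared_error(f, fake_quantize(f, layer_scale)) for f in filters
]

# Channelwise: each filter gets its own dedicated scaling factor.
channelwise_error = [
    mean_squared_error(f, fake_quantize(f, symmetric_scale(f))) for f in filters
]

for i, (lw, cw) in enumerate(zip(layerwise_error, channelwise_error), start=1):
    print(f"filter {i}: layerwise {lw:.3e}  channelwise {cw:.3e}")
```

The narrow-range first filter suffers most under the shared layerwise scale, because the scale is set by the wide-range third filter. With its own scaling factor its error drops by several orders of magnitude, while the third filter is unaffected since it determines the layerwise scale in the first place.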